RealityServer 6.1 is here and there are lots of great new features to go through. Top of the list are the newly rewritten glTF 2.0 importer, with support for all of the latest extensions, and the brand new USD importer. There are also several quality of life improvements and a new Iray release with support for the latest GPUs and scene attribute data for MDL.

Paul Arden has worked in the Computer Graphics industry for over 20 years, co-founding the architectural visualisation practice Luminova out of university before moving to mental images and NVIDIA to manage the Cloud-based rendering solution, RealityServer, now managed by migenius where Paul serves as CEO.

In this article I am going to show you how to add light sources to your RealityServer scene using the V8 server-side JavaScript API. You will learn how to add several different types of lights, including a photometric light using an IES data file, an area light, a spot light and daylight. This will be a very simple example but will give you all of the pieces you need to programmatically add lighting to your scene. You can expand on the concepts shown here to make different types of lighting very easily.

This is a big one! We’ve been in beta for a while so some of our advanced users have already had a chance to check out the great new features in RealityServer 6.0. Some headline items are a new fibers primitive, matte fog, toon post-processing, sheen BSDF, V8 engine version bump, V8 command debugging and much more. Check out the full article for details.

With the Sayduck Platform, users can create, manage and publish engaging visuals for their products in both 3D and Augmented Reality. Leading design brands such as Alessi, Kristalia and Vallila Interior trust the platform to create accurate representations of their products every day. We spoke with Niklas Slotte from Sayduck about adding true photorealistic rendering to an already great platform and why they chose RealityServer to get the job done.

Last month at NVIDIA GTC Digital 2020, migenius had the pleasure of presenting alongside our long-term customer Amazon on the concept of Visuals as a Service. This presentation is now available online, and if you would like to see what several industry-leading companies are already doing with RealityServer, be sure to check it out.

We’ve had quite a few customers express interest in downloading content from AWS S3 rather than persistently storing it on their RealityServer instances. While this introduces latency, in many use cases it can still make a lot of sense. Our recently released HTTP Request functionality for V8 makes it easy to download content from public URLs. What do you do if your S3 buckets require authentication though? Let’s dive in.

This is the seventh update of RealityServer 5.3 since it was released in May. That’s a new release every month, folks! While this post will focus on the features added in the most recent build, 2593.276, we’ll also recap some of the other features added along the way in previous updates. The headline item for this new release is the ability to make HTTP requests from within the server-side V8 JavaScript API. This has been a long-standing request from users and we are finally able to ship this feature to you. There are also some cool new glTF features and much more.

Customers of RealityServer are usually seeking out the highest quality visuals possible. However, in many contexts it is not economical to provide this quality at all times. As a result, hybrid solutions using both WebGL and RealityServer have become popular. Here, WebGL is used during most of the interaction and RealityServer just at the end of the process for a final high quality image. The problem this creates is that you have to make the WebGL version of the content somehow, ideally without making your content twice. In this article we’ll see how glTF 2.0, MDL materials and distillation let you repurpose your content automatically.

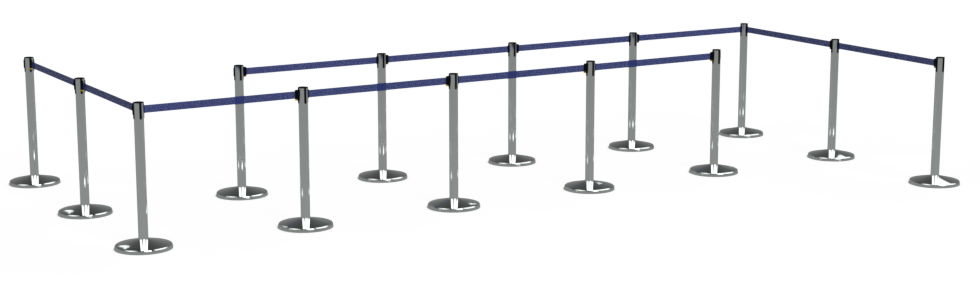

Even though RealityServer is great at streaming fully interactive, server-side rendering directly to your browser, not every use case requires this level of interactivity. RealityServer has recently introduced a new feature called the Queue Manager which integrates with popular message queue services to manage rendering and other RealityServer tasks. In this article we will dive into the details on how to get up and running with this great new feature using Amazon SQS and S3 services.

RealityServer is a platform for integrating photorealistic 3D rendering into your application. It is based on a web services methodology and can be used both for rendering automation and fully interactive rendering. In this introduction we will cover the core concepts of RealityServer development and what you need to understand in order to get started.

The legacy JavaScript client library shipped with RealityServer was designed in 2010, back when JavaScript in the browser was mostly unusable without some sort of framework like jQuery, WebSockets were still under design and JavaScript on the server was virtually unheard of. A lot has changed since then: JavaScript has significantly evolved and is supported by a huge module ecosystem, WebSockets are under the hood of everything and Node.js is becoming ubiquitous. The RealityServer client library, however, has barely changed. Until now.

So you’ve got your RealityServer release email and downloaded everything, but how do you install it? This article will take you through the steps for setting up RealityServer on Windows or Linux and also provide an overview of the RealityServer directory structure. We’ll also cover how to set up your licensing.

Continuing our series of articles on using the server-side V8 API, we will now add some objects, loaded from a model file, to the scene that we set up and lit in our previous articles. This one is going to be very simple but for bonus points we’ll add multiple copies of the object in different locations.

In a previous post we covered creating an empty scene by writing a server-side V8 command in JavaScript. Now let’s turn on some light by adding an environment lighting setup to our empty scene. We’ll create two new commands, one to add lighting based on a spherical HDRI image and another using the built-in physically based sun and sky system.

migenius has now joined the Khronos Group as an Associate Member. The Khronos Group has helped shape several key standards that are actively used in our products today, in particular glTF 2.0, which has been fully supported by RealityServer for some time. With the advent of the 3D Commerce Working Group we have joined to help represent the needs of our customers. We are looking forward to some great collaboration.

RealityServer with support for NVIDIA RTX technology is here! This release includes a major Iray version bump which adds support for accelerating Iray rendering with the new RT Core hardware inside RTX cards and the Tesla T4. There is also a great performance improvement for Iray Interactive (on all cards) and support for MDL 1.5. Let’s take a look.

NVIDIA RTX technology was announced late last year and has gathered a lot of coverage in the press. Many software vendors have been scrambling to implement support for it since then and there has been a lot of speculation about what is possible with RTX. Now that Iray RTX is finally about to be part of RealityServer we can talk about what RTX means for our customers and where it will be most beneficial for you.

Our next RealityServer update is here. This is an incremental release with quite a few requested fixes and enhancements but also contains a few great new features for heavy users of MDL materials. This will be the last RealityServer 5.2 release as we will shortly be releasing RealityServer 5.3 with NVIDIA RTX support, so watch out for that one. In the meantime, let’s check out what’s new in this version.

The Timex Group has joined the growing list of household names who use RealityServer to create the imagery that pushes customers to choose their products over a competitor’s. The retail sector demands the highest quality imagery in order to replace traditional photography with photorealistic 3D rendering. It demands not just quality, but speed and scale in everything from real-time and interactive to offline rendering.

Chris has over 20 years of direct experience and deep knowledge of 3D project management systems and high speed physical based rendering technologies. As former CEO and co-founder of Luminova, a specialist 3D visualisation and application development group, some 3,000 projects were successfully delivered to many of the world’s largest companies, over a 13 year period. Since 2011 Chris has been a partner and co-founder of migenius, the home of the cloud based RealityServer solution platform.

Our first update for RealityServer 5.2 is here. It includes an Iray version bump and some nice convenience features. The most significant feature however is support for queuing renders with Iray Server. This will be of interest to those building internal rendering automation tools with RealityServer.

RealityServer 5.2 is here and adds some great functionality. Hugely expanded glTF 2.0 importer support, wireframe rendering, lightmap rendering, section plane capping, MDL 1.4 support, UDIM support and many more features have been added along with many fixes and smaller enhancements based on extensive customer feedback. In this post we will run through some of the most interesting functionality and how it can help you build your applications.

We’ve covered server-side V8 commands before but in this post we will go into a little more detail and use some of the helper classes that are provided with RealityServer to make common tasks easier. Quite often you want to kick off an application by creating a valid, empty scene ready for adding your content. Actually, it’s something we need to do in a lot of our posts here, so to avoid repeating it each time, let’s make a V8 command to do it for us.

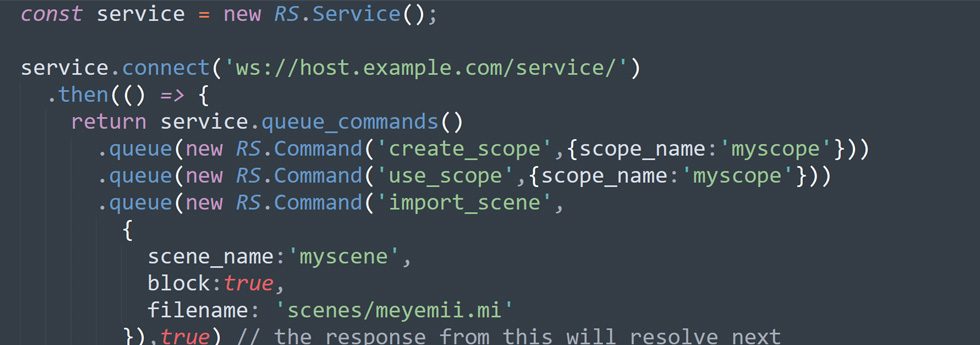

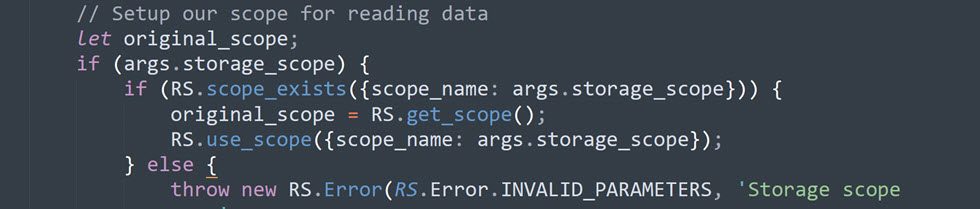

A core concept in RealityServer which many new users have some difficulty understanding is Scopes. The use of scopes is critical in making effective use of RealityServer in a production environment where multiple users or multiple independent operations are happening at once. In this article we will go into more depth on what scopes are and how to use them.

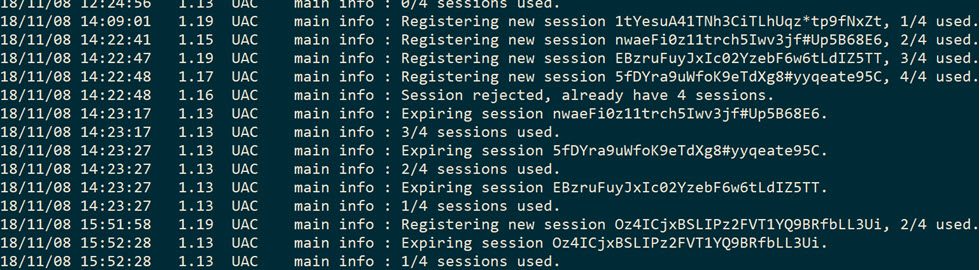

In this article we’ll take a quick look at how to use the UAC system in RealityServer to effectively manage user sessions and clean up server memory when users go away. There won’t be a lot of pretty pictures (well there is one if you make it to the end) but for those of you getting your hands dirty with RealityServer in production, you’ll get some valuable pointers to help keep your server from filling up with unused data.

In our last post we explored using the RealityServer compositing system to produce imagery for product configurators at scale. Check out that article first if you haven’t already as it contains a great introduction to how the system works. In this follow up post we will explore the possibilities of using the same system to modify the lighting in a scene without having to re-render, allowing us to build a lighting configurator.
