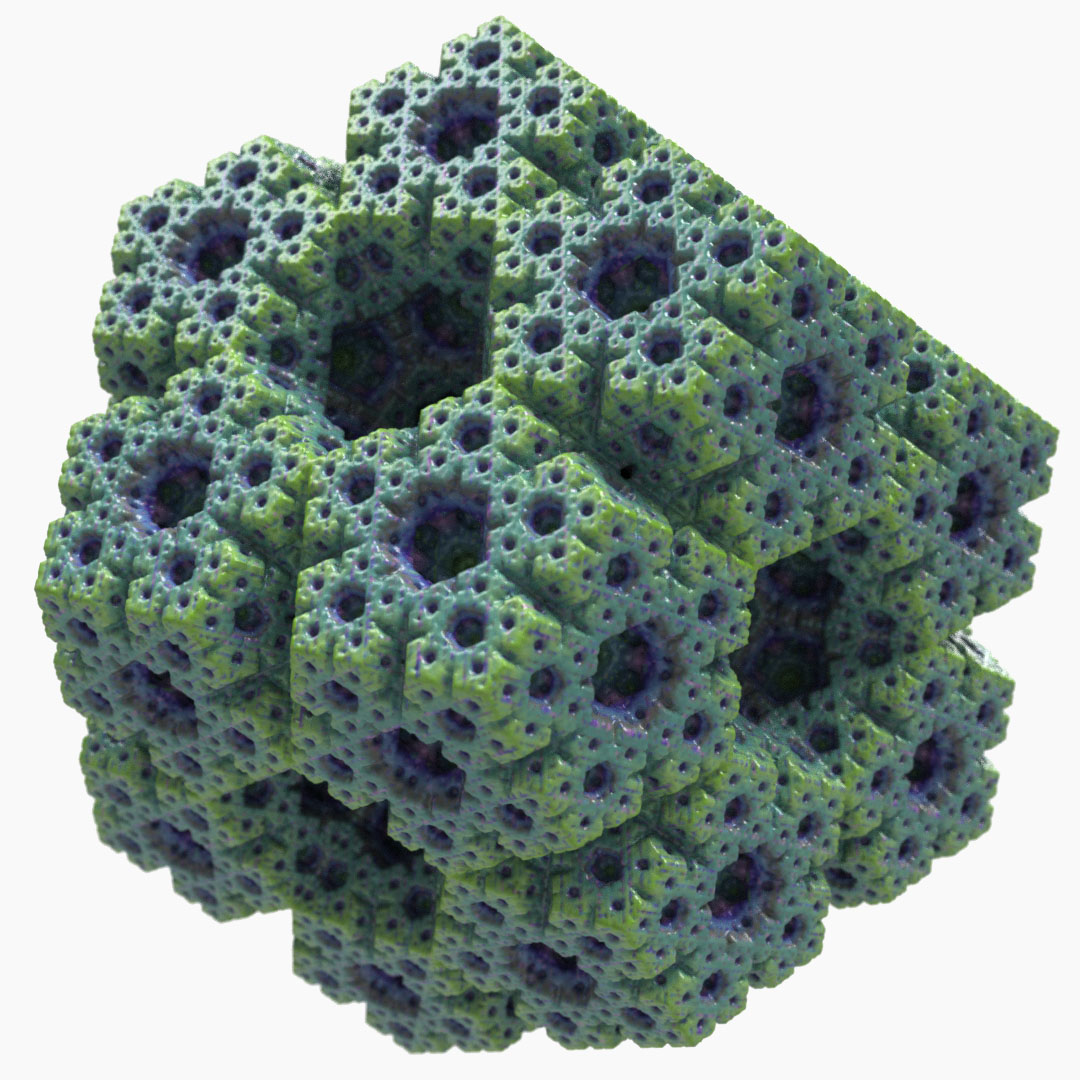

RealityServer 6.1 is here and there are lots of great new features to go through. Top of the list are the newly rewritten glTF 2.0 importer, with support for all of the latest extensions, and the brand new USD importer. There are also several quality-of-life improvements and a new Iray release with support for the latest GPUs and scene attribute data for MDL.

In previous releases of RealityServer our glTF importer relied on the third party Assimp library. While this has served us well to this point, glTF has become such an important format that we needed more direct control over the way we import it. To that end we have re-written the glTF importer from the ground up.

Our new implementation is programmatically derived from the JSON schemas of the glTF 2.0 specifications as well as those of the various extensions we are supporting (and we support a lot more of them now). This makes it a lot easier for us to add support for new extensions in the future. This will become increasingly important as the Khronos Group rolls out new ratified extensions.

We still support everything we did in the previous importer but we have now added support for several more ratified extensions as well, further reducing the need to pre-process glTF files with unsupported extensions. The complete list of supported extensions is now as follows:

In fact, the only ratified extensions we do not support are those which have no sensible translation to our rendering engine: KHR_materials_unlit and KHR_techniques_webgl. In addition to new extensions we now support additional core properties, including:

For the extras property, which can be attached to any glTF node and can contain any valid JSON, we store the raw JSON of the property as a String attribute on the node in RealityServer. This makes it easy to pull out in your V8 commands if needed.
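Because the attribute holds raw JSON text, whatever consumes it has to parse it before use. The following is a minimal Python sketch of that step only; the attribute value shown is made up for illustration, and inside a V8 command the equivalent would be a `JSON.parse` call on the attribute value.

```python
import json

# Hypothetical raw value of the extras String attribute for one node.
raw_extras = '{"sku": "CHAIR-042", "tags": ["oak", "indoor"], "price": 129.5}'


def parse_extras(raw):
    """Parse raw extras JSON, returning None when the text is not valid JSON."""
    try:
        return json.loads(raw)
    except (TypeError, ValueError):
        # json.JSONDecodeError is a subclass of ValueError
        return None


extras = parse_extras(raw_extras)
```

Guarding the parse is worthwhile since extras content is authored outside your application and may not have the shape you expect.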

Vertex colours use the new MDL scene data facility (described later in this article) and will be enabled for the entire scene if any vertex colours are detected. Since this is not a commonly used feature we only add the extra lookup nodes for scenes that actually use them.

While the new glTF importer should give almost identical results to the old one in cases where new features are not used, the strategy for naming and organising the scene graph has changed substantially. If you were relying on the scene graph structure or the names of nodes, this is an area where you may need to change your application.

We no longer name scene elements based on the name property in the glTF file. In glTF the same name can be used on multiple nodes, which would not work in RealityServer, so numbered suffixes needed to be added. This also meant that you could not easily predict the name of an element directly from the structure of the glTF file, as it would depend on how many nodes with the same name existed.

To address this, nodes are now named using a reproducible pattern which can be derived directly from the glTF contents. Elements are named based on a combination of node numbers and types, and instead of using the name property directly, the importer creates a String attribute gltf::name on the element with the glTF name property so you still have access to it. This restructuring is the main breaking change for existing applications.

Another new feature is the ability for RealityServer to automatically fetch remote resources referenced by http:// or https:// based URIs within glTF content. If your references are remote but referenced relative to the original location of your glTF file then you can provide an importer option giving the URI prefix to use when locating resources.
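To make the idea of a URI prefix concrete, here is a small Python sketch of how a relative resource reference could be resolved against one. It illustrates the general technique only: the function name, the example URLs and the exact resolution rules are assumptions, not the importer's actual behaviour or option name.

```python
from urllib.parse import urljoin, urlparse


def resolve_resource(uri, prefix):
    """Return an absolute URL for a resource URI found in a glTF file."""
    if urlparse(uri).scheme in ("http", "https"):
        return uri  # already absolute, so it can be fetched as-is
    # Make sure the prefix ends with "/" so urljoin treats it as a directory.
    base = prefix if prefix.endswith("/") else prefix + "/"
    return urljoin(base, uri)


# Hypothetical location the glTF file was originally served from.
prefix = "https://example.com/models/chair"

texture_url = resolve_resource("textures/wood.png", prefix)
buffer_url = resolve_resource("https://cdn.example.com/chair.bin", prefix)
```

Here `texture_url` resolves under the prefix, while `buffer_url` is left untouched because it is already a full https:// URI.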

Unlike the Assimp importer the new glTF importer also emits progress information which can be useful in some contexts. There are several other issues that have also been fixed which would have been more complex to address in Assimp. We are expecting to make continuous improvements to the glTF importer so keep your eyes on the release notes.

Currently the Assimp version is still used for glTF export, however we are also working on a rewrite of the glTF exporter which is expected to ship with RealityServer 6.2. The new exporter will have much greater support for round-tripping glTF content through RealityServer while retaining built-in properties. You will notice that all glTF specific attributes are prefixed with gltf:: for straightforward filtering.

The Universal Scene Description or USD is a format developed by Pixar for storing 3D data which has started to gain significant traction. While used primarily in the visual effects industry, the fact that Apple has chosen a variant of the format called USDz as the standard for its AR Quick Look feature on iOS has led to broader interest.

It has also been chosen as the basis of the NVIDIA Omniverse platform, a collaborative environment for 3D content creation and delivery which spans many popular 3D applications. A fantastic consequence of this is that NVIDIA has added support for MDL to the USD schema and this has been officially adopted by Pixar.

As we have seen increased customer adoption of the format we felt it was a great time to begin adding support for USD to RealityServer. With RealityServer 6.1 you can import any standard USD and USDz content, including those using the MDL schema. We utilise the standard Pixar USD libraries for this support so that we can have full control over the import process.

USD files are now available from a wide range of sources and can be exported from an increasing number of content creation tools. Since this is the first release containing USD support we’d be very interested in how customers are using it and what additional features you’d like to see. In a future release, likely RealityServer 6.2, we plan to add the ability to export USD files as well so that you can easily create content for your AR applications.

RealityServer 6.0 introduced the concept of Log Handlers and functionality in our C++ API to allow users to implement their own ILog_handler which would perform user defined actions when certain types of log messages were encountered. This uses the concept of tags which are attached to certain log messages, in particular messages related to GPU failures or other issues which, while not fatal, cause significant performance degradation.

Previously there was no way to take action automatically if these problems were encountered since they were just messages in the log output. Some of our customers resorted to parsing the log output and searching for keywords. This approach is very brittle and would easily break if the wording of messages changed over time. The log handler functionality provided a much more robust way to handle this.

We shipped an example log handler in C++ source code form with RealityServer 6.0, however this was just an example and users who wanted to take advantage of the feature needed to be familiar with our C++ API. For RealityServer 6.1 we have added a new standard plugin called the Webhook Log Handler which you can use without any coding.

This log handler is configured in your realityserver.conf file and allows you to trigger an HTTP request when certain log messages are encountered. This request can be either a GET or a POST and you can use a simple templating language to construct the request using data from the log message such as the tags, category, severity, module, code and the message itself.
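The templating idea can be sketched in Python as follows. This is an illustration of the concept only: the placeholder syntax is Python's `string.Template`, not the Webhook Log Handler's own template language, and the record values and payload shape are invented for the example.

```python
import json
from string import Template

# Hypothetical log record using the fields named above.
record = {
    "tags": "gpu,fallback",
    "category": "RENDER",
    "severity": "error",
    "module": "IRAY",
    "code": "42",
    "message": "CUDA device 0 failed, falling back to CPU",
}

# Placeholders are filled from the record; unused fields are simply ignored.
body_template = Template("[$severity] $module/$category ($code): $message")


def build_payload(rec):
    """Render a log record into a JSON body suitable for a webhook POST."""
    return json.dumps({"text": body_template.substitute(rec)})


payload = build_payload(record)
```

The resulting JSON string is what a POST request would carry to the receiving service, for example a chat notification endpoint.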

Some potential use cases include automatic Slack notifications when your server falls back to CPU mode for some reason, persistent logging of specific errors, such as GPU errors, to an HTTP logging service, triggering actions such as restarting your RealityServer process and much more. Basically anything you can hook up to an HTTP request is possible.

A new zip class has been added to the V8 server-side JavaScript API which lets you extract the contents of a .zip file. You can extract the whole contents or provide a pattern to filter which files get extracted. This can be great for applications where the user uploads content to the server as a .zip file and you need to unpack it to be loaded by the server.

We’ll be exposing more ZIP related functionality in future releases, however since extraction was such a common request from customers we wanted to include this one now.
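The pattern-filtered extraction described above can be sketched with Python's standard library. This is not the V8 zip class or its method names, which are documented separately; the helper name, the glob-style pattern and the archive contents are assumptions made for the illustration.

```python
import fnmatch
import io
import os
import tempfile
import zipfile


def extract_matching(zip_bytes, pattern, dest_dir):
    """Extract only the archive members whose names match a glob pattern."""
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        names = [n for n in archive.namelist() if fnmatch.fnmatchcase(n, pattern)]
        archive.extractall(dest_dir, members=names)
    return names


# Build a small in-memory archive standing in for a user upload.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("model/chair.gltf", "{}")
    archive.writestr("model/wood.png", "fake image bytes")
    archive.writestr("readme.txt", "hello")

with tempfile.TemporaryDirectory() as dest:
    extracted = extract_matching(buffer.getvalue(), "*.gltf", dest)
    found = os.path.exists(os.path.join(dest, "model", "chair.gltf"))
```

Only the .gltf member is written to disk; the texture and readme stay in the archive.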

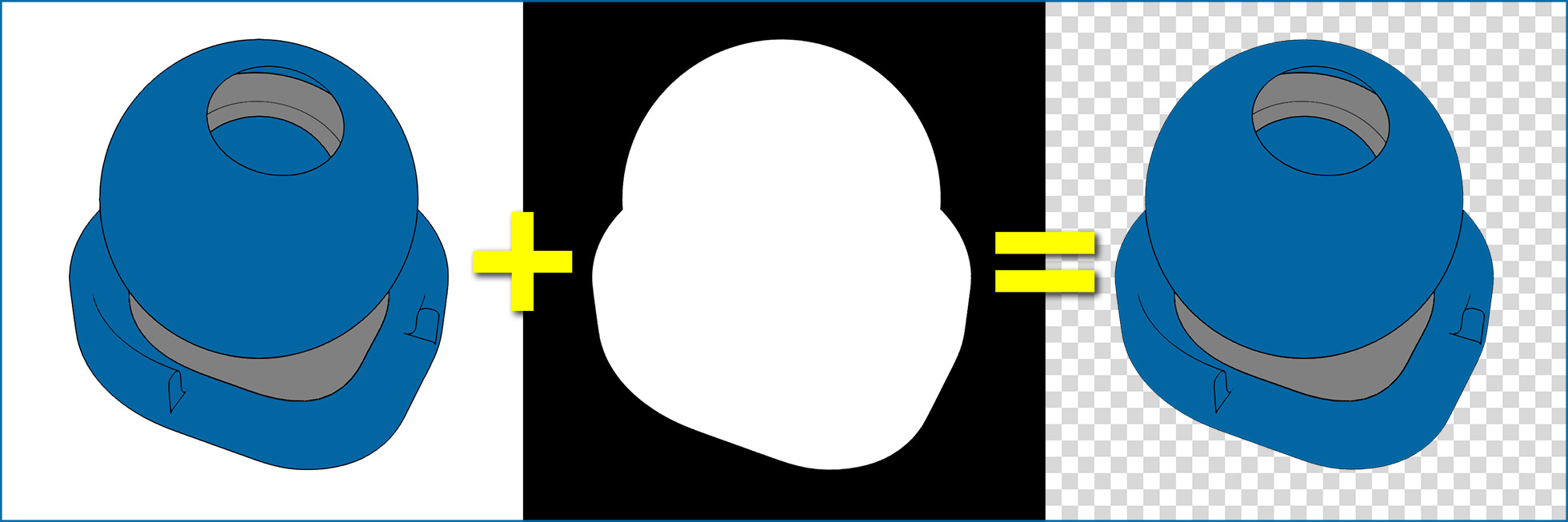

Some customers have reported use cases where they want to take channels from one canvas and put them into another in their V8 scripts. To help with that we have added a new combine_canvas_channels command which takes a set of mappings from source to destination canvas channels and returns a combined image.

As an example, you might want to render a toon post-processed canvas from the BSDF Weight canvas, however the BSDF Weight canvas doesn’t give you an alpha channel. With this command you can render the Alpha canvas separately and then combine it into the image from the BSDF Weight canvas to get a final toon processed image with an alpha channel.
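Conceptually, the operation is a per-pixel copy of selected channels into a new image. The Python sketch below shows that idea with plain lists; the function name, the mapping format and the pixel values are hypothetical and do not reflect the actual parameters of combine_canvas_channels.

```python
def combine_channels(canvases, mappings, pixel_count):
    """Build RGBA pixels by copying channels from several source canvases.

    canvases: dict of canvas name -> list of pixel tuples
    mappings: list of (source_canvas, source_channel, dest_channel) triples
    """
    # Start from opaque black so unmapped channels have a defined value.
    out = [[0.0, 0.0, 0.0, 1.0] for _ in range(pixel_count)]
    for source, src_channel, dst_channel in mappings:
        for i, pixel in enumerate(canvases[source]):
            out[i][dst_channel] = pixel[src_channel]
    return [tuple(p) for p in out]


# Two-pixel stand-ins for a toon processed RGB canvas and an Alpha canvas.
canvases = {
    "toon": [(0.8, 0.2, 0.1), (0.0, 0.0, 0.0)],
    "alpha": [(1.0,), (0.0,)],
}
mappings = [("toon", 0, 0), ("toon", 1, 1), ("toon", 2, 2), ("alpha", 0, 3)]

combined = combine_channels(canvases, mappings, 2)
```

The colour comes from the first canvas and the fourth channel from the second, which mirrors the toon plus alpha scenario described above.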

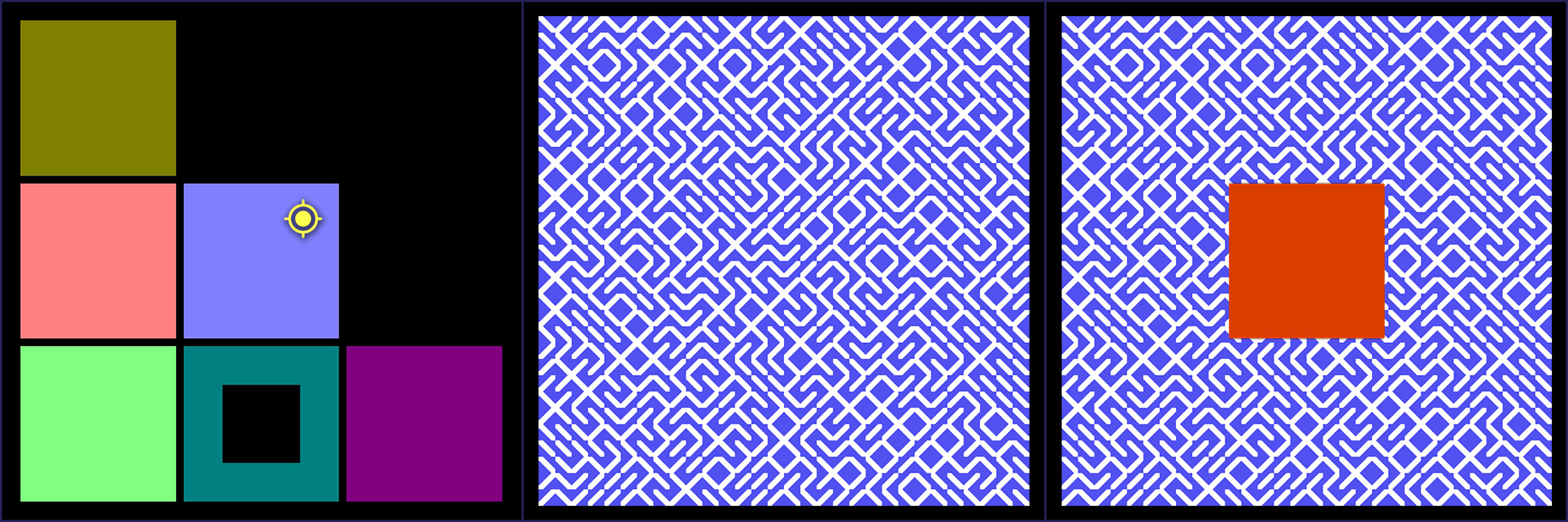

The Lightmap renderer in RealityServer allows you to generate irradiance data in the texture space of your geometry for pre-computed lighting. This is great for real-time environments like WebGL and game engines. Recently we’ve come across use cases where users would like other information about their model mapped into the texture space of the geometry, in particular position, normal and derivatives. This can be useful when you want to query this information in specific ways and as a side benefit it can be generated very quickly using OpenGL rather than a full render.

You can output the full original data or if you need to store the data in conventional textures we also have a variant which keeps the values in the 0 – 1 range for you. You can generate multiple canvas types in a single render call for multiple lightmaps. Please see the updated Lightmap renderer documentation in the RealityServer Features section on the Getting Started menu in the Document Center for full details of the new render context options and how to use them.

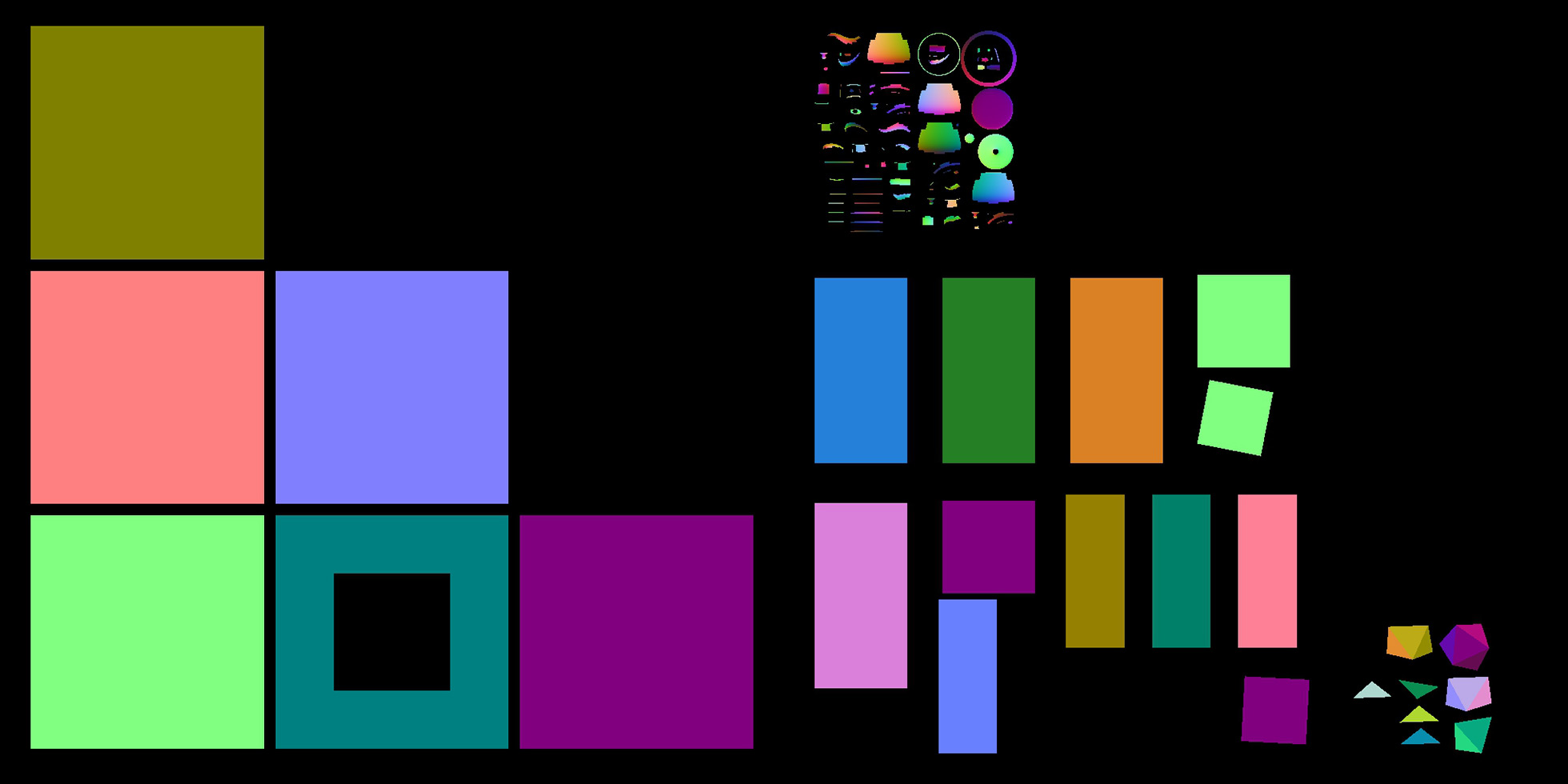

We’ve seen a few cases where customers were using external tools to perform editing of texture data to allow users to interactively draw into textures. This had definite performance issues so we have now added a specific command to handle one of the more common use cases, flood filling regions of a texture.

The texture_fill_region command takes a source texture, a target texture, a location and the data to fill. The source texture is used to determine which regions to fill. Ideally it should contain contiguous regions of a solid colour. The location is then used to pick the region to fill and the data provided is flood filled into the region in the target texture, overwriting what was there before. Think of it as a programmatic version of the bucket paint tool in Photoshop or Paint. There are several other options to control the tolerance used when determining the extent of a region, including a special method which can be used when the source texture contains normal data to base the tolerance on an angle.
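The separation between the source texture (which defines the region) and the target texture (which receives the fill) can be sketched with a standard breadth-first flood fill. This Python version is an illustration under simplifying assumptions: single-value pixels, 4-connected neighbours and a plain absolute-difference tolerance. It is not the command's implementation, and the function and parameter names are hypothetical.

```python
from collections import deque


def fill_region(source, target, start, fill_value, tolerance=0.0):
    """Flood fill `target` over the region of `source` connected to `start`.

    source, target: 2D lists (rows of values) with identical dimensions.
    start: (x, y) pixel that picks the region. Returns the pixel count filled.
    """
    height, width = len(source), len(source[0])
    sx, sy = start
    reference = source[sy][sx]
    seen = {(sx, sy)}
    queue = deque([(sx, sy)])
    while queue:
        x, y = queue.popleft()
        target[y][x] = fill_value  # overwrite whatever was there before
        for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if (0 <= nx < width and 0 <= ny < height
                    and (nx, ny) not in seen
                    and abs(source[ny][nx] - reference) <= tolerance):
                seen.add((nx, ny))
                queue.append((nx, ny))
    return len(seen)


source = [
    [0, 0, 1],
    [0, 1, 1],
    [1, 1, 0],
]
target = [[9] * 3 for _ in range(3)]
filled = fill_region(source, target, (0, 0), 5)
```

Only the three connected zero pixels in the top-left corner are filled; the isolated zero in the bottom-right corner is left alone because it is not contiguous with the picked location.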

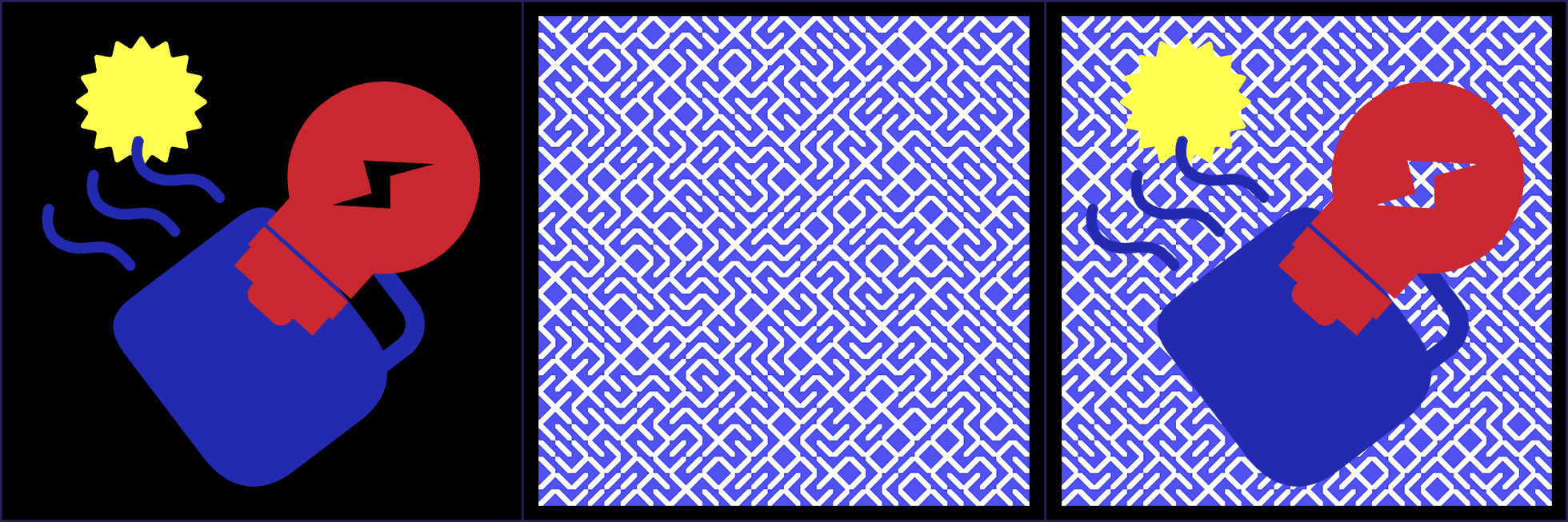

RealityServer has had a decals feature for quite some time as part of its Iray integration. However, while this is extremely flexible and easy to control, there are cases where you need decals to actually be baked into texture maps, for example if you want to export to a file format like glTF which does not support decals natively.

To facilitate this we have added the texture_blit_decals command which takes a source texture onto which the decals will be applied, a target texture which will contain the result and an array of decal textures with their associated positions within the image, rotation and scaling. The command uses OpenGL to rapidly overlay multiple decal images on the source texture and you can control how each decal overlaps through the order the decals are provided in the array.
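The effect of array order on overlap can be shown with a simple painter's-order overlay. The Python sketch below deliberately ignores rotation, scaling and partial transparency and does not use OpenGL; the data layout and names are hypothetical and only meant to show why later decals end up on top.

```python
def blit_decals(source, decals):
    """Overlay decals onto a copy of `source` in array order (later ones win).

    source: 2D list of pixel values.
    decals: list of dicts with "position" (x, y) and "pixels" (2D list),
            where None marks a transparent decal pixel.
    """
    target = [row[:] for row in source]  # leave the source texture untouched
    height, width = len(target), len(target[0])
    for decal in decals:
        ox, oy = decal["position"]
        for dy, row in enumerate(decal["pixels"]):
            for dx, value in enumerate(row):
                x, y = ox + dx, oy + dy
                if value is not None and 0 <= x < width and 0 <= y < height:
                    target[y][x] = value
    return target


source = [[0, 0, 0] for _ in range(3)]
decals = [
    {"position": (0, 0), "pixels": [[1, 1], [1, 1]]},
    {"position": (1, 1), "pixels": [[2, 2], [2, 2]]},
]
baked = blit_decals(source, decals)
```

The two decals overlap at the centre pixel, and the second one in the array covers the first there.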

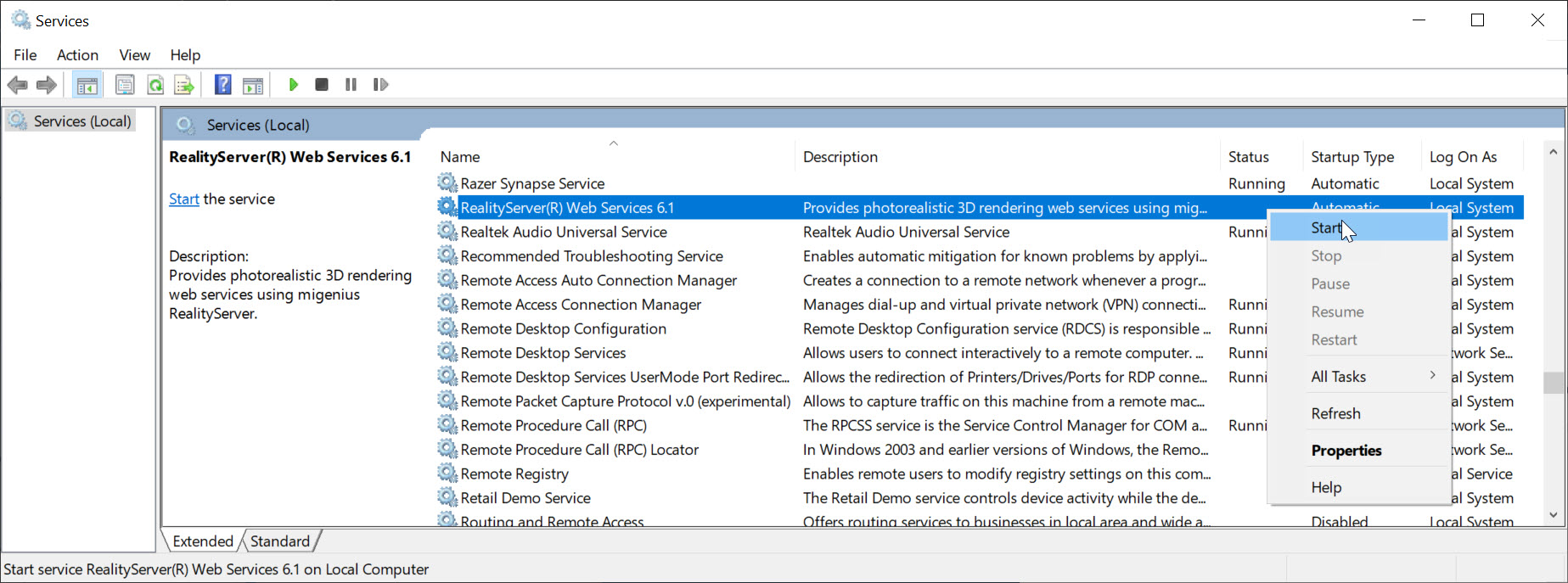

RealityServer now supports being installed as a Windows Service. In the Document Center go to Getting Started -> RealityServer Configuration -> Supported Command Line Options where you will find information on the command line options used to install RealityServer as a service. Please note that OpenGL features such as those used by the wireframe and lightmap renderers will not function when running as a service due to Windows limitations.

RealityServer 6.1 ships with Iray 2020.1.4 embedded. This release adds some significant new features and we have updated RealityServer to take advantage of them all.

RealityServer 6.0 introduced the SSIM predictor for determining how far from fully converged your image is. However if you wanted to actually use this information to terminate rendering, you had to extract the SSIM value and check it in your own code. With RealityServer 6.1 you can now simply set progressive_rendering_quality_ssim to true and rendering will automatically terminate when the SSIM value reaches the value set in the post_ssim_predict_target attribute.

Note that it is still not possible to use SSIM and AI Denoising together, so this feature is primarily intended for imagery rendered without denoising for now.
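As a rough illustration of enabling SSIM based termination from a client, the Python sketch below builds a JSON-RPC 2.0 batch that sets the two attributes named above. Everything beyond those two attribute names is an assumption to be checked against the command documentation: the use of an `element_set_attribute` command and its parameter names, the attribute types, the options element name `myOptions` and the target value of 0.98.

```python
import json


def set_attribute_call(call_id, element, name, value, value_type):
    """Build one JSON-RPC 2.0 request object that sets an attribute."""
    return {
        "jsonrpc": "2.0",
        "id": call_id,
        "method": "element_set_attribute",  # assumed command name
        "params": {
            "element_name": element,
            "attribute_name": name,
            "attribute_value": value,
            "attribute_type": value_type,  # assumed type names
            "create": True,
        },
    }


OPTIONS = "myOptions"  # hypothetical name of the scene's options element

batch = [
    set_attribute_call(1, OPTIONS, "progressive_rendering_quality_ssim", True, "Boolean"),
    set_attribute_call(2, OPTIONS, "post_ssim_predict_target", 0.98, "Float32"),
]
request_body = json.dumps(batch)
```

The sketch stops at building the request body; sending it, and any scope handling your application needs, is left out.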

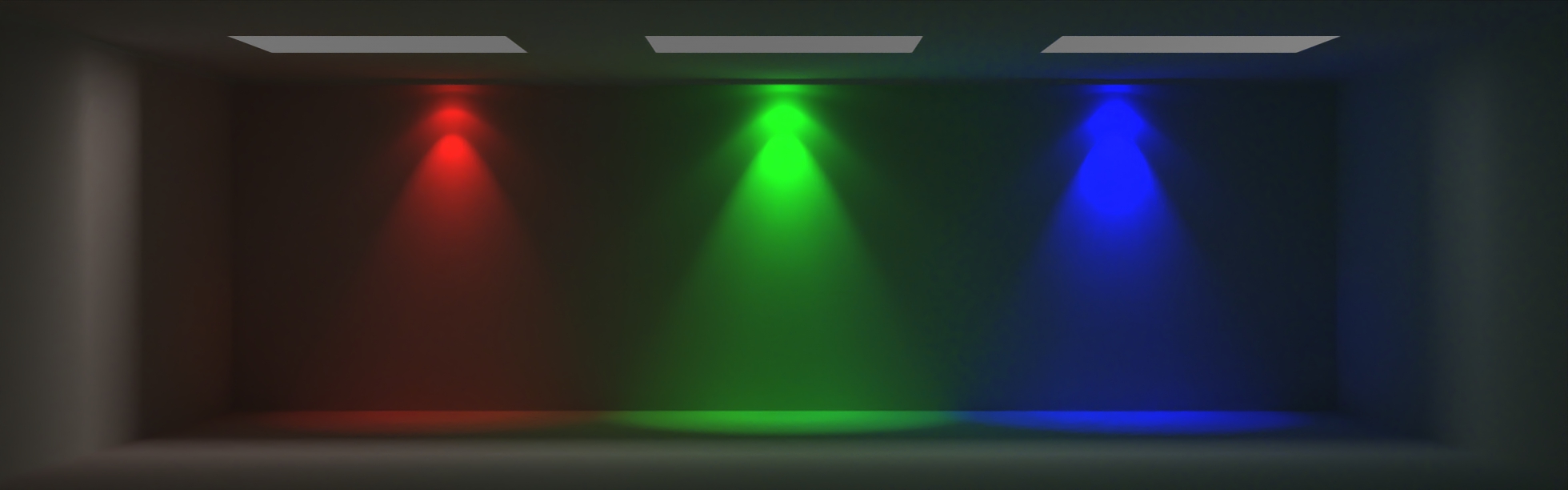

It is now possible to use scene attributes and custom vertex data within MDL materials. While the MDL specification has included scene data since version 1.6, this was not yet supported by Iray. You can now use the ::scene module in MDL which contains functions like the following:

You can think of these just like texture lookups, except they look up scene attributes by name. The lookup functions take the name of the attribute as well as a default value to use if the attribute isn’t found. The types above are just examples, please see the MDL specification for a full list of supported types. The types map to the RealityServer attribute types that you would expect them to (e.g., float3 maps to Float32<3>).

In addition to looking up data from attributes stored on scene elements, these functions can also look up attribute data stored in the vertices of Triangle_mesh elements (more supported types are likely to come in the future). We have expanded the functionality of the geometry_generate_mesh and geometry_get_mesh commands as well as the Triangle_mesh V8 wrapper class to allow you to specify such per-vertex attribute data when using the binary mesh layout.

There are some great use cases for this feature, and attribute lookups can be used on both lights and geometry. For example, you could have a single complex light definition where the individual instances carry different attributes that set the light brightness, giving you dimming scenarios without having to change the main MDL light material. You could also have a complex material where you just want to change the main colour on different instances, or assign random colours to instances.

Because making the rendering depend on user created scene attributes requires rendering to be updated when such attributes change, a new filtering mechanism has been added so that you can exclude attributes which don’t affect rendering from triggering scene updates. In RealityServer you can configure these filters either in your realityserver.conf file or using specialised commands.

The new add_custom_attribute_filter, get_custom_attribute_filters and remove_custom_attribute_filter commands allow runtime control of the attribute filters. The new attribute_filter configuration directive can be used in your RealityServer configuration files to permanently add filters (which cannot be removed by calling commands) to your installation. RealityServer now ships with directives enabled to filter out the glTF and USD attributes by default.

Support for the Ampere architecture comes with this release. Unfortunately previous versions will not function on Ampere cards due to the architectural change. In our testing Ampere is giving around a 1.4x speedup over Volta when comparing similar cards (the Tesla V100 and the NVIDIA A100) and an even larger speedup of around 2.4x over Turing with similar cards (the GeForce RTX 2080 Ti compared to the GeForce RTX 3090, for example). Here is a quick set of benchmarks with the new release.

The Deep Learning Denoiser is now fully migrated to use the OptiX denoiser built into the NVIDIA drivers. This comes with generally improved denoising results and the possibility for improvements added through driver updates rather than always requiring updates to Iray and RealityServer itself. As a nice side benefit, the size of the RealityServer distribution has shrunk significantly.

Support for the latest X-Rite AxF format has been added, including the new EP-SVBRDF based materials. Support has also been added for refracting clearcoats interacting with the underlying SVBRDF.

RealityServer 6.2 is already well under development and some headline features coming in that release are a re-written glTF exporter (it was just the importer in this release) and USD export support. As always we’d love to hear what you want to see added to RealityServer so be sure to reach out to us.

Paul Arden has worked in the Computer Graphics industry for over 20 years, co-founding the architectural visualisation practice Luminova out of university before moving to mental images and NVIDIA to manage the Cloud-based rendering solution, RealityServer, now managed by migenius where Paul serves as CEO.