NVIDIA RTX technology was announced late last year and has gathered a lot of coverage in the press. Many software vendors have been scrambling to implement support for it ever since, and there has been a lot of speculation about what is possible with RTX. Now that Iray RTX is finally about to become part of RealityServer, we can talk about what RTX means for our customers and where it will be most beneficial for you.

The speed-up from Iray RTX is highly scene dependent but can be substantial. If your scene has low geometric complexity, you are likely to see only a small improvement. Larger scenes can see a speed-up of around 2x, while extremely complex scenes can reach 3x.

RTX is both software and hardware. The key enabling innovation introduced with RTX hardware is a new type of accelerator unit within the GPU called an RT Core. These cores are dedicated purely to ray-tracing operations and can perform them significantly faster than traditional general-purpose GPU compute. Performance will depend on how many RT Cores your card has; the Quadro RTX 6000, for example, has 72.

Alongside the new hardware, NVIDIA has introduced various APIs and SDKs that let software developers access the new RT Cores. In the gaming world, for example, RTX hardware is accessed through Microsoft's DirectX Raytracing (DXR) API, while production rendering tools such as Iray use OptiX.

Rendering software must be modified to use these new APIs and SDKs in order to access the hardware. With RTX hardware and the latest RealityServer release, the portion of Iray's rendering work that involves ray intersection and querying acceleration structures (see below) can be offloaded to the new RT Core hardware, greatly speeding up that part of the rendering computation.

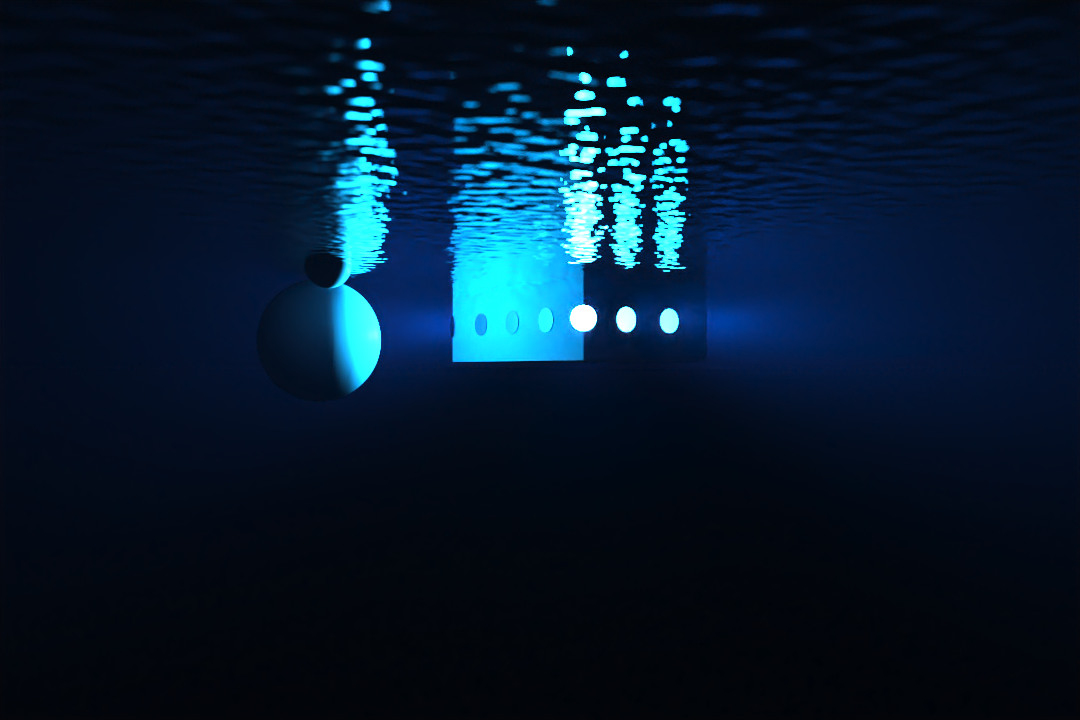

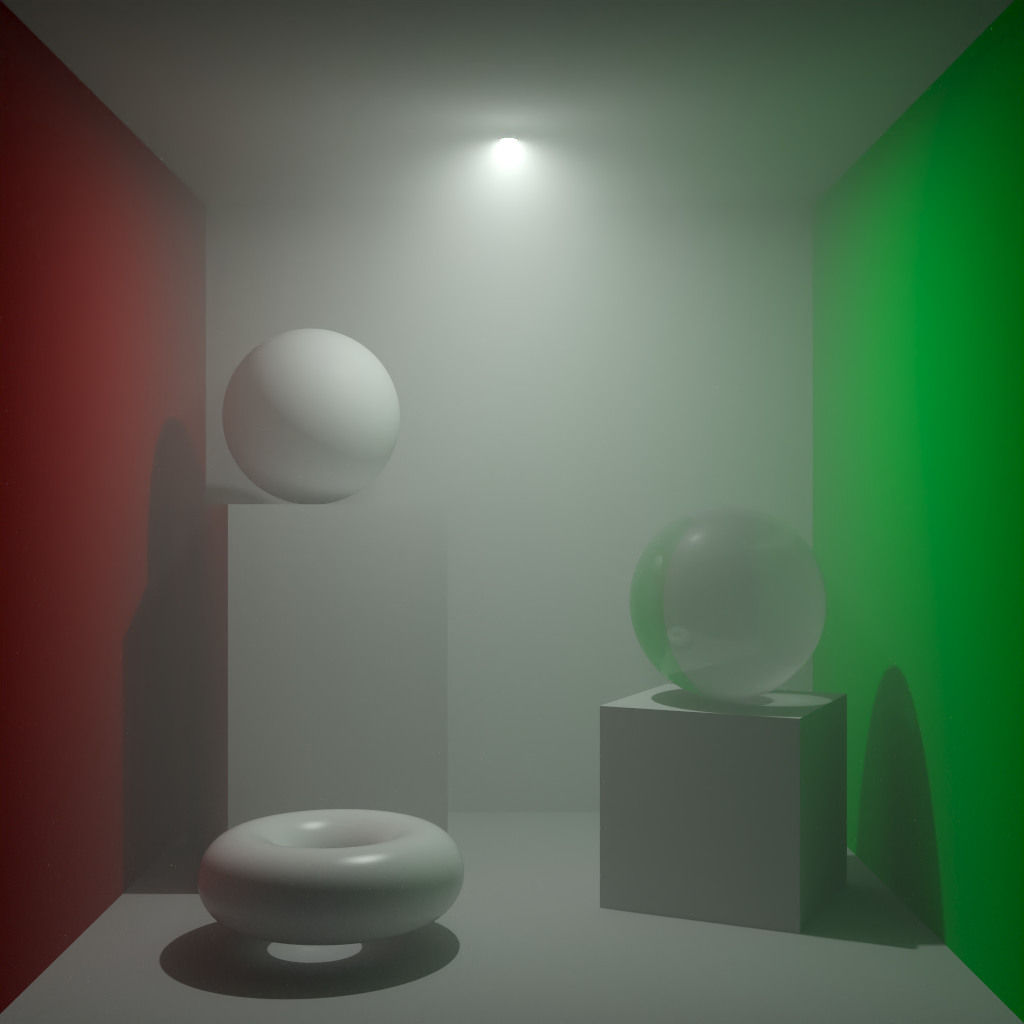

Ray intersection is the work of determining whether a ray (just think of it as a straight line) passes through a given primitive (e.g., a triangle). We won't cover exactly how path tracers like Iray work, but Disney have a great video, "Practical Guide to Path Tracing", which gives you a good idea of the basics. You'll quickly see that ray intersection is key to making this work.
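To make "ray intersection" concrete, here is a minimal Python sketch of the widely used Möller–Trumbore ray-triangle test. It is a textbook illustration only, not the code Iray or the RT Cores actually run, and the function and helper names are our own.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle(origin: Vec3, direction: Vec3,
                 v0: Vec3, v1: Vec3, v2: Vec3,
                 eps: float = 1e-9) -> Optional[float]:
    """Möller–Trumbore: distance t along the ray to the hit point, or None on a miss."""
    e1 = sub(v1, v0)
    e2 = sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:               # ray is parallel to the triangle's plane
        return None
    inv_det = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv_det          # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv_det         # distance along the ray
    return t if t > eps else None    # ignore hits behind the ray origin
```

For example, a ray fired along +z from (0.25, 0.25, -1) hits the unit triangle lying in the z = 0 plane, so `ray_triangle((0.25, 0.25, -1.0), (0.0, 0.0, 1.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))` returns `1.0`. Only a handful of multiplies and comparisons are involved; the difficulty comes from how many times it must be done.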

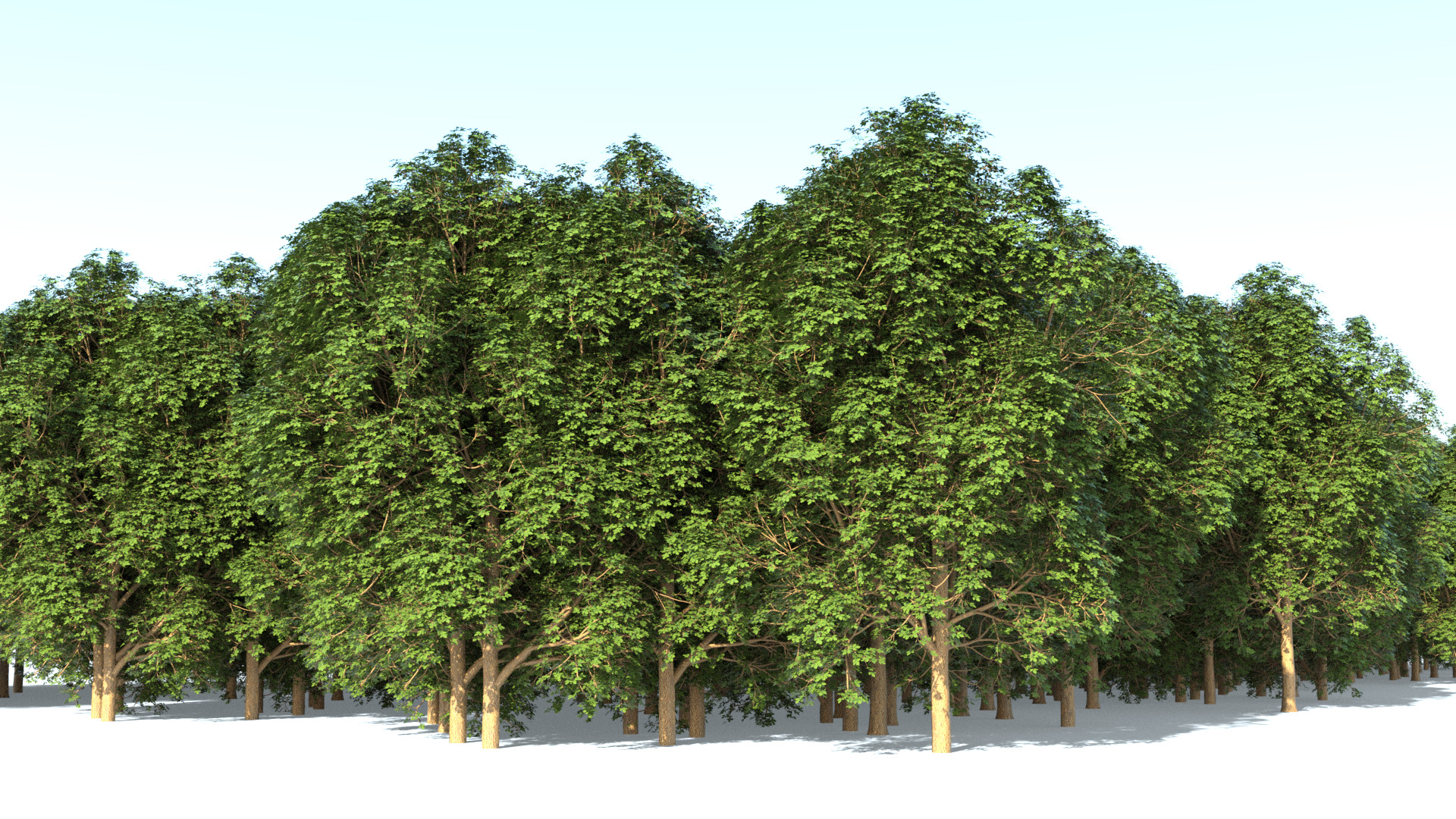

While the mathematics involved in checking whether a ray intersects a primitive is relatively simple (at least for a triangle), scenes today can easily contain millions or even hundreds of millions of primitives. To make matters worse, in typical scenes you also need to perform these checks for millions of rays. That's millions of primitives times millions of rays, a whole lot of computation.
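With round, purely illustrative figures of one million rays and one million primitives, checking every ray against every primitive costs:

$$N_{\text{tests}} = N_{\text{rays}} \times N_{\text{primitives}} = 10^{6} \times 10^{6} = 10^{12}$$

That is a trillion ray-primitive tests before a single material, texture or light has been evaluated.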

Naively checking for intersections with all primitives doesn't cut it; you'd be waiting years for your images. To speed things up, ray-tracing almost always also uses an acceleration structure. This uses some pre-computation to split the scene into a hierarchy of groups of primitives, each enclosed by a simple bounding volume that can be tested rapidly, so that large numbers of primitives can be eliminated from consideration quickly.

As a very simple example, imagine you have a scene with a million primitives distributed fairly evenly. If you cut the scene into two groups, you can first test whether a ray intersects the volume enclosing one of the groups; if it does not, you can immediately exclude half of the primitives. By nesting structures like this, you can test progressively until you reach the primitive that is intersected.
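Carrying the halving idea through to the end: each level of a perfectly balanced binary hierarchy halves the remaining candidates, so getting from a million primitives down to one takes

$$\left\lceil \log_2 10^{6} \right\rceil = 20 \text{ levels}, \qquad \text{since } 2^{19} = 524{,}288 < 10^{6} \le 2^{20} = 1{,}048{,}576.$$

Under that idealised assumption (only one branch needs visiting at each level, which real rays often violate), a ray needs on the order of twenty cheap volume tests instead of a million primitive tests.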

While this is a massively oversimplified example, and there is a lot of subtlety and nuance to implementing a highly optimised system for this, the basic principle remains the same: devise a cheap test that eliminates as many primitives from consideration as possible. RT Core hardware accelerates both the querying of acceleration structures and the ray intersection calculations, making the whole process significantly faster.
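The same principle can be sketched as a tiny bounding volume hierarchy (BVH) in Python. This is a minimal illustration under our own assumptions: spheres stand in for triangles to keep the exact test short, the hierarchy is built with a simple median split, and the ray collects every hit rather than only the closest one. Production BVHs, including the ones RT Cores traverse, are far more sophisticated, and none of the names below come from Iray or OptiX.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Sphere:
    center: Vec3
    radius: float


@dataclass
class Node:
    box_min: Vec3
    box_max: Vec3
    left: Optional["Node"] = None
    right: Optional["Node"] = None
    prims: Optional[List[Sphere]] = None  # only leaves hold primitives


def bounds(prims: List[Sphere]) -> Tuple[Vec3, Vec3]:
    """Axis-aligned box enclosing every sphere in prims."""
    lo = tuple(min(p.center[a] - p.radius for p in prims) for a in range(3))
    hi = tuple(max(p.center[a] + p.radius for p in prims) for a in range(3))
    return lo, hi


def build(prims: List[Sphere], leaf_size: int = 2) -> Node:
    """Median split: sort along the box's longest axis and halve the set."""
    lo, hi = bounds(prims)
    if len(prims) <= leaf_size:
        return Node(lo, hi, prims=prims)
    axis = max(range(3), key=lambda a: hi[a] - lo[a])
    ordered = sorted(prims, key=lambda p: p.center[axis])
    mid = len(ordered) // 2
    return Node(lo, hi, left=build(ordered[:mid], leaf_size),
                right=build(ordered[mid:], leaf_size))


def hits_box(o: Vec3, d: Vec3, lo: Vec3, hi: Vec3) -> bool:
    """Cheap slab test: does the ray o + t*d (t >= 0) pass through the box?"""
    t_near, t_far = 0.0, math.inf
    for a in range(3):
        if d[a] == 0.0:  # parallel to this slab: origin must already be inside it
            if o[a] < lo[a] or o[a] > hi[a]:
                return False
            continue
        t1, t2 = (lo[a] - o[a]) / d[a], (hi[a] - o[a]) / d[a]
        t_near, t_far = max(t_near, min(t1, t2)), min(t_far, max(t1, t2))
        if t_near > t_far:
            return False
    return True


def hits_sphere(o: Vec3, d: Vec3, s: Sphere) -> bool:
    """Exact test: solve |o + t*d - c|^2 = r^2 and accept a root with t >= 0."""
    oc = [o[i] - s.center[i] for i in range(3)]
    a = sum(x * x for x in d)
    b = 2.0 * sum(oc[i] * d[i] for i in range(3))
    c = sum(x * x for x in oc) - s.radius ** 2
    disc = b * b - 4.0 * a * c
    return disc >= 0.0 and (-b + math.sqrt(disc)) / (2.0 * a) >= 0.0


def traverse(node: Node, o: Vec3, d: Vec3, stats: dict) -> List[Sphere]:
    """Return every sphere the ray hits, skipping subtrees whose box it misses."""
    stats["box_tests"] += 1
    if not hits_box(o, d, node.box_min, node.box_max):
        return []  # one cheap test culls the whole subtree
    if node.prims is not None:
        stats["prim_tests"] += len(node.prims)
        return [p for p in node.prims if hits_sphere(o, d, p)]
    return traverse(node.left, o, d, stats) + traverse(node.right, o, d, stats)


random.seed(1)
spheres = [Sphere((random.uniform(0, 100), random.uniform(0, 100),
                   random.uniform(0, 100)), 0.5) for _ in range(10_000)]
root = build(spheres)
origin, direction = (50.0, 50.0, -10.0), (0.0, 0.0, 1.0)
stats = {"box_tests": 0, "prim_tests": 0}
bvh_hits = traverse(root, origin, direction, stats)
brute_hits = [s for s in spheres if hits_sphere(origin, direction, s)]  # 10,000 exact tests
print(stats, len(bvh_hits), len(brute_hits))
```

Both approaches find the same spheres, but on random data like this the hierarchy performs exact tests on only a tiny fraction of the 10,000 spheres the brute-force loop has to check. The slab test in `hits_box` is the "cheap test" described above: a few subtractions and comparisons that can rule out an entire subtree at once.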

So how much faster is Iray RTX? It depends. Yes, everyone hates this answer, but there is no way around it here. For practical scenes, we have so far seen speed-ups ranging from 1.05x to 3.00x. That is a pretty wide range, so what determines how much faster it will be? We didn't describe ray intersection above just for fun.

Notice that when we talked about ray intersection, we never mentioned materials, textures, lighting, global illumination, shadows or any of the other jargon commonly associated with photorealistic rendering. That is because a renderer has to do much more than just ray intersection to do its job, even if it calls itself a ray-tracer.

All of the calculations needed for solving light transport, evaluating materials, looking up textures, calculating procedural functions and so on are still performed on the traditional GPU compute hardware using CUDA (at least in the case of Iray). This portion of the rendering calculation is not accelerated by RTX. So how much of a typical Iray rendering is actually ray intersection?

In many scenes, we found that ray intersection accounts for only 20% of the total rendering work. This is a very important point. Even if the new RT Cores made ray intersection infinitely fast, so that it took no time at all, 80% of the work in that scene would still remain. A 100 second render would therefore still take 80 seconds with RTX acceleration, giving a speed-up of 1.25x. Of course, ray intersection is not free with RTX, just faster, so the real speed-up would be lower than this; 1.25x is the hypothetical upper limit.

If 60% of the work in a scene is ray intersection, you will naturally see a much more significant speed-up. On a 100 second render, an infinitely fast ray intersector still leaves 40 seconds of rendering, giving a speed-up of 2.5x at the hypothetical upper limit. In general, we have found RTX provides the greatest benefit in very complex scenes with millions of triangles and in scenes that heavily exploit instancing.
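The arithmetic in the last two paragraphs is Amdahl's law: if a fraction p of the render time is ray intersection and only that part becomes s times faster, the overall speed-up is 1 / ((1 - p) + p / s). A minimal Python sketch (the helper name is our own), where the default s = infinity reproduces the hypothetical upper limits above:

```python
import math


def rtx_speedup(p: float, s: float = math.inf) -> float:
    """Amdahl's law: overall speed-up when a fraction p of render time is ray
    intersection and only that part becomes s times faster.
    s = math.inf models an infinitely fast intersector (the upper limit)."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    remaining = (1.0 - p) + p / s  # fraction of the original render time left
    if remaining == 0.0:
        return math.inf  # everything was intersection and it became free
    return 1.0 / remaining


for p in (0.2, 0.6):
    print(f"{p:.0%} intersection -> at most {rtx_speedup(p):.2f}x")
# 20% intersection -> at most 1.25x
# 60% intersection -> at most 2.50x
```

With a finite, purely hypothetical intersection speed-up of s = 4, the 60% scene would drop to `rtx_speedup(0.6, s=4.0)`, about 1.82x, which illustrates why real results sit below the upper limit.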

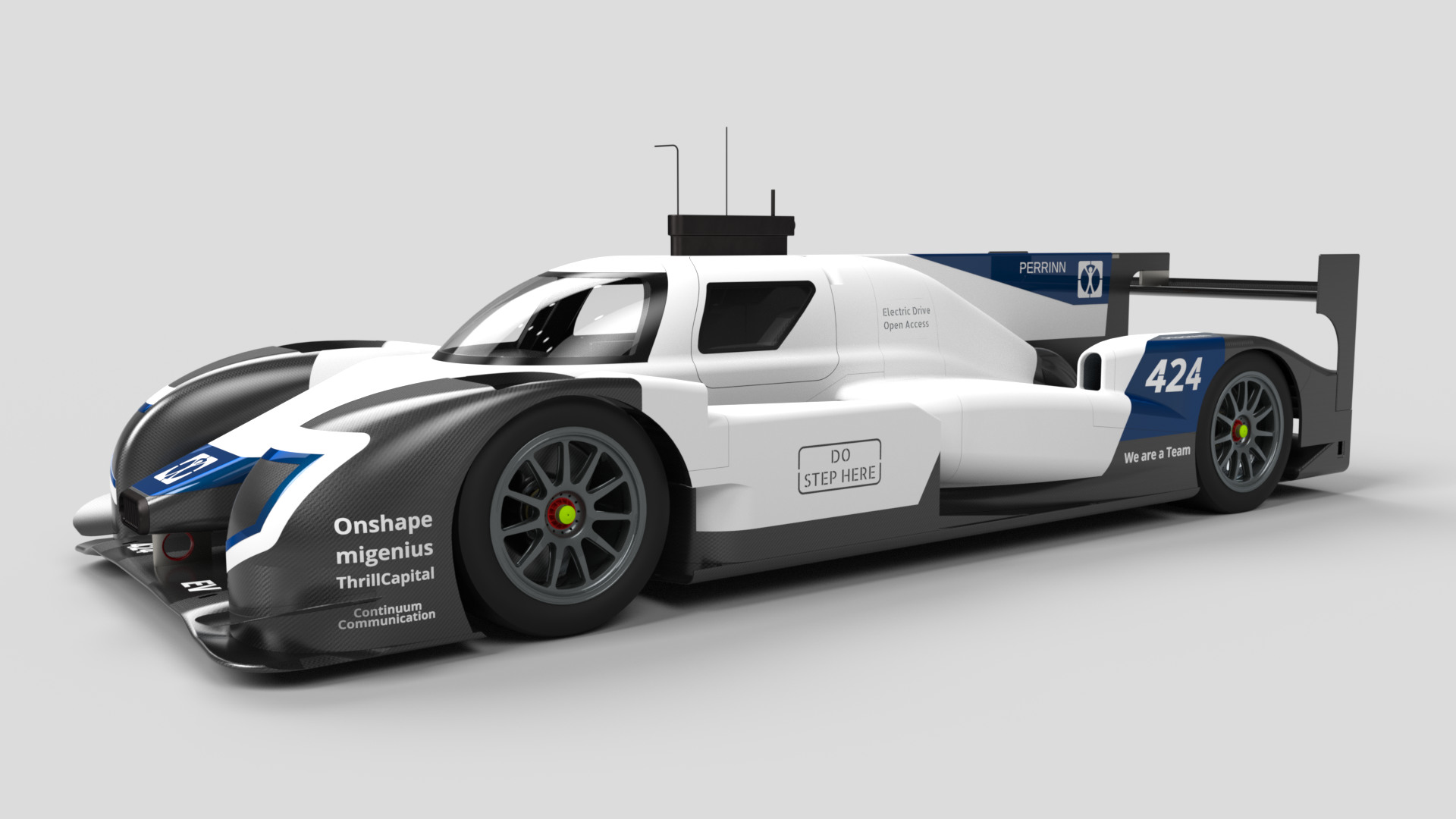

We took 14 scenes we had available and tested them on a Quadro RTX 6000 card with Iray 2018.1.3 and Iray RTX 2019.1.0 to evaluate the speed-up.

Above, we have also included an estimate, where available, of the percentage of rendering time spent on ray intersection. This shows clearly how that percentage determines the speed-up you get from RTX hardware. It is also clear that the more complex scenes benefit much more, since they spend more of their time on ray intersection.
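Running the same reasoning backwards gives a quick sanity check on numbers like these. Since an infinitely fast intersector gives at most 1 / (1 - p), any measured speed-up S implies that at least 1 - 1/S of the original render time was ray intersection. A minimal sketch, assuming nothing but the intersection work changed between the two runs (not strictly true across Iray versions, which also bring unrelated improvements); the helper is our own:

```python
def min_intersection_fraction(speedup: float) -> float:
    """Smallest fraction of render time that must have been ray intersection
    for an observed speed-up to be possible, from the bound 1 / (1 - p)."""
    if speedup < 1.0:
        raise ValueError("speed-up must be at least 1.0")
    return 1.0 - 1.0 / speedup


for observed in (1.05, 3.00):  # the range quoted earlier in this article
    print(f"{observed:.2f}x needs at least {min_intersection_fraction(observed):.1%} intersection")
# 1.05x needs at least 4.8% intersection
# 3.00x needs at least 66.7% intersection
```

So the top of the range is only reachable in scenes where roughly two thirds or more of the render time was ray intersection, which matches the observation that the most complex scenes benefit most.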

Unfortunately, the strong scene dependence of RTX performance means there is no single number that describes its performance advantage when integrated into a full renderer like Iray. Any way you cut it, you'll definitely get a performance boost from RTX; exactly how much will depend on your scenes.

One bonus not considered here is that the inference part of the AI Denoiser in Iray can be accelerated by the Tensor Cores on RTX cards, much as it was on Volta-based hardware. This can be quite useful when denoising larger images. The new version of Iray also brings a more general performance bump that is unrelated to RTX, as well as a significant speed-up in Iray Interactive mode for real-time use cases.

The NVIDIA Tesla T4 card, which is increasingly used in the data center and is becoming available from various cloud providers, also contains RT Core hardware (40 RT Cores), even though it doesn't carry the RTX branding. This isn't emphasised in marketing material, so it is easy to miss.

For many of our customers, availability of hardware at popular cloud providers is important, since they are often not deploying on their own hardware. At the time of writing, Google Compute Engine has the Tesla T4 in general availability, while Amazon Web Services has Tesla T4-based instances in beta, with general availability expected soon.

We get a lot of questions from customers about whether they should be looking at RTX hardware for RealityServer. It certainly gives you more options to consider, and it is now important to think about your content as well when making a purchasing decision. If you deal with highly complex scenes, there is little doubt that RTX is worthwhile, and the price points of RTX hardware compared to, say, Volta-based hardware make it very compelling, even if it doesn't quite reach Volta's performance on smaller scenes. Compared to Pascal- or Maxwell-based cards, RTX cards are a pretty clear winner in price/performance, and they walk all over older Kepler-based cards.

The best way to make a decision is to benchmark a representative scene or scenes from your own content rather than relying on our generic benchmark tests. Our benchmarks will give you a good feel for the baseline difference between cards, but you need to test your own data to determine how much additional benefit you will get on RTX hardware. If you're a RealityServer customer, or are considering purchasing RealityServer, and have scene data, we can help you with these tests; contact us to learn more.
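If you run such comparisons yourself, a small, generic timing harness keeps them honest. The sketch below is our own and is not a RealityServer API: `render` is a hypothetical zero-argument function you would write to trigger a render of your scene on one configuration (for example, to the same fixed iteration count on both), and the median of several runs is used to damp one-off outliers.

```python
import statistics
import time
from typing import Callable, List


def time_renders(render: Callable[[], None], runs: int = 5) -> List[float]:
    """Wall-clock seconds for `runs` calls of a render function you supply."""
    times = []
    for _ in range(runs):
        start = time.perf_counter()
        render()  # hypothetical: however you trigger a render of your scene
        times.append(time.perf_counter() - start)
    return times


def measured_speedup(baseline: List[float], candidate: List[float]) -> float:
    """Ratio of median render times: values above 1.0 mean candidate is faster."""
    return statistics.median(baseline) / statistics.median(candidate)
```

With made-up timings of `[100.0, 102.0, 98.0]` seconds on a baseline card and `[50.0, 51.0, 49.0]` on an RTX card, `measured_speedup` returns `2.0`.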

Paul Arden has worked in the Computer Graphics industry for over 20 years, co-founding the architectural visualisation practice Luminova out of university before moving to mental images and NVIDIA to manage the Cloud-based rendering solution, RealityServer, now managed by migenius where Paul serves as CEO.