19 September, 2018, London – Project 424 welcomes its first brand technology partners to the team as development of the world’s first all-electric and autonomous Le Mans Prototype race car enters an exciting new phase.

The trio of new partners, Onshape, SimScale and migenius, will form an integral part of Project 424’s overall development through the provision of high-performance, cloud-based tools for the design, simulation and 3D prototype renderings of this unique Le Mans Prototype race car.

Paul Arden has worked in the Computer Graphics industry for over 20 years, co-founding the architectural visualisation practice Luminova out of university before moving to mental images and NVIDIA to manage the Cloud-based rendering solution, RealityServer, now managed by migenius where Paul serves as CEO.

RealityServer 5.1 Update 251 has just been released. It’s mainly a bugfix release but adds a few nice extras that several customers have asked about, including a PBR MDL material, Iray Viewer loader and commands for manipulating measured BSDF data.

RealityServer 5.1 Update 227 has just been released and it has some great new features. It includes a new Iray version with improvements to the AI Denoiser, an easy-to-use compositing system and a bunch of new convenience commands. This post gives an overview of the new functionality; however, the compositing features are so significant that we are writing a dedicated article on them, which will be out soon.

Come and meet us at NVIDIA’s annual GPU Technology Conference in San Jose 26-29th March, 2018. NVIDIA’s theme this year is “AI & Deep Learning” and we’ll be on the ‘Iray Plugins’ booth #826, where you’ll be able to see examples of Iray images rendered with the latest AI De-Noising technology. AI now accelerates de-noising of Iray images by a factor of up to 10x.

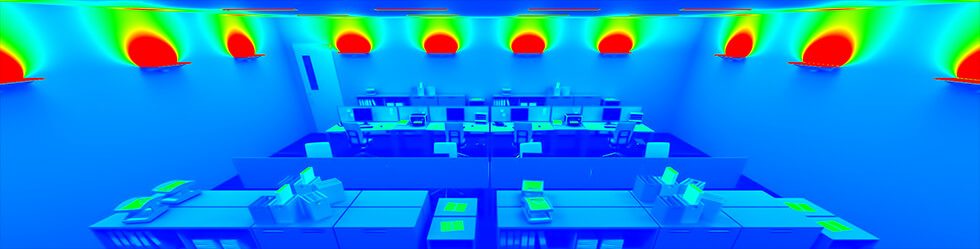

Bloom Unit, our physically accurate, photorealistic renderer for SketchUp, has just won another award. The LIT Lighting Design Awards selected Bloom Unit for an award in the Innovative Lighting Design Software Applications category, making this the third lighting industry accolade to be handed out for a RealityServer-based product. Behind the scenes, Bloom Unit uses RealityServer as its core rendering engine and much of its functionality is inherited from this platform. It’s great to see recognition of the difference physical accuracy makes in the usefulness of rendering for this industry.

We recently released RealityServer 5.1 build 2017.173. This is mainly an incremental and bug fix release but adds some cool features some customers have been waiting for, including multiple UV sets, materials and holes for the generate_mesh command, updated AssImp plugin, a new Smart Batch command and render loop improvements.

RealityServer 5.1 introduced new functionality for working with canvases in V8. In this post I’m going to show you how to do some basic things like resizing and accessing individual canvas pixels. We’ll build a fun little command to render a scene and process the result into a piece of interactive ASCII art. Of course, this doesn’t have much practical utility but it’s a great way to learn about this new feature!
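
The core idea of turning rendered pixels into ASCII art can be sketched independently of RealityServer. The following is a minimal illustration in plain Python, not the V8 command from the post: it assumes the image is already available as rows of 8-bit `(r, g, b)` tuples, and the names `RAMP`, `downsample`, `luminance` and `to_ascii` are hypothetical helpers introduced only for this example.

```python
# Characters ordered from darkest to brightest (an arbitrary choice for this sketch).
RAMP = " .:-=+*#%@"


def downsample(pixels, step):
    """Crude nearest-neighbour resize: keep every `step`-th pixel in both directions."""
    return [row[::step] for row in pixels[::step]]


def luminance(rgb):
    """Approximate brightness of an 8-bit RGB pixel using Rec. 709 weights."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def to_ascii(pixels):
    """Map each pixel to a character in RAMP according to its brightness."""
    lines = []
    for row in pixels:
        chars = []
        for rgb in row:
            index = int(luminance(rgb) / 255.0 * (len(RAMP) - 1) + 0.5)
            chars.append(RAMP[min(index, len(RAMP) - 1)])
        lines.append("".join(chars))
    return "\n".join(lines)


if __name__ == "__main__":
    # A tiny synthetic 4x8 horizontal gradient stands in for a rendered canvas.
    image = [[(x * 36, x * 36, x * 36) for x in range(8)] for _ in range(4)]
    print(to_ascii(downsample(image, 2)))
```

Because terminal characters are taller than they are wide, a real implementation would usually sample fewer rows than columns to keep the aspect ratio.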

RealityServer 5.1 is here and it has something a lot of users have been asking about. This release adds the new AI Denoising algorithm for fast and high quality denoising of your images using state-of-the-art machine learning technology. You really need to try it to fully appreciate the performance benefits, but we’ll show you a few images to give you a feeling for what it is capable of. We are also adding support for the new NVIDIA Volta architecture and, as usual, a range of other smaller enhancements.

As of November 20th 2017, migenius has taken over the support and development of the Iray for Rhino plugin. We have been big fans of Rhino for a long time and look forward to expanding the plugin’s features, including increased compatibility with RealityServer and integration of the latest Iray features. We have released an updated version of the plugin for Rhino 5.0 with Iray 2017.1.1, including the incredible new AI denoising functionality. You can download a free 30-day trial and purchase Iray for Rhino from irayplugins.com.

RealityServer for Onshape is now live! Following a successful beta, migenius is pleased to announce that RealityServer rendering for Onshape is now publicly available. Visit the RealityServer rendering page in the Onshape App Store to subscribe and start rendering fast, photorealistic images of your Onshape models immediately.

The first two hours every month are free…

Two free hours per month are included in your subscription, and you can purchase additional rendering hours as you need them. Your rendering runs on a dedicated server with a high-end NVIDIA GPU. To show you how straightforward the service is to use, we have created this short movie to get you started.

Happy rendering!

We are happy to announce the immediate availability of RealityServer 5.0. There are some great new features so we’ve put together a quick list of the headline items. We will also be posting additional articles on the individual features and how to use them but for now take a look at what’s new.

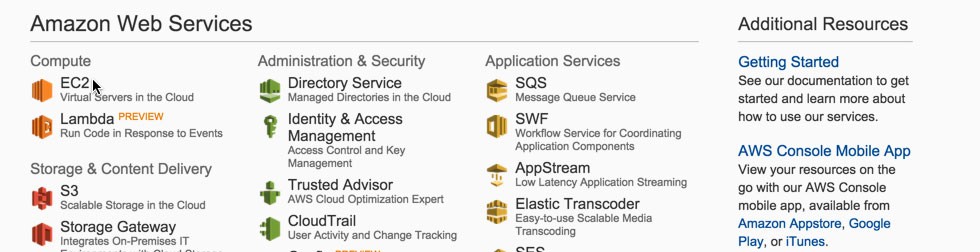

A lot of new customers ask us where they can run RealityServer since they don’t have their own server or workstation with NVIDIA GPU hardware available. Starting up RealityServer on Nimbix, where everything is pre-configured for you, is covered in another article. On AWS, however, you need to do a bit more setup yourself. We are assuming here that you are already familiar with Amazon Web Services and starting instances on Amazon EC2, along with basic concepts like security groups. We won’t cover the basics of how to start an instance here, but there is lots of good information about that online, including this guide from Amazon. So, let’s get started.

Watched by almost 1,000 people at the UK lighting industry’s Lux Awards in London, migenius’ Bloom Unit rendering plugin for SketchUp won the “Enabling Technology of the Year” award ahead of a shortlist comprising some much bigger and perhaps better known names (see the full list here).

The panel of 17 judges drawn from all over the industry cited Bloom Unit as a product that was a ‘step change’ in lighting design – by ‘leveraging the power of cloud computing’ migenius had delivered a product that allows renders ‘of awesome scope and accuracy’.

A feeling for just how prestigious this event is can be gained from the official movie, with migenius and their UK partner for the lighting industry, onlight, picking up their award around the two minute mark.

We are certainly proud that Bloom Unit has been recognised by the UK lighting industry. Proof indeed of just how important the RealityServer technology that underpins it is for businesses wishing to differentiate the user experience for the products and services they are bringing to market.

If you want to check out Bloom Unit for yourself, sign up for a free 14-day trial on the Bloom Unit website.

We recently released RealityServer 4.4 build 1527.93. This update included Iray 2016.2 and some interesting new features. While still an incremental update, the big item many of our customers have been asking for is finally here, NVIDIA Pascal architecture support. So your Tesla P100, Quadro P6000, Quadro P5000, GeForce GTX TITAN X, GeForce GTX 1080, 1070 and other Pascal cards will now work with RealityServer. Keep reading for some more details of the new features in update 93 of RealityServer.

The number of cloud service providers offering NVIDIA GPU resources is increasing and in today’s article we will show you how to get started using RealityServer with Nimbix. migenius has deployed several of its customer projects on the Nimbix platform and it offers some unique advantages such as containerised environments (instead of virtualisation), fast start-up times and usage charged by the minute instead of by the hour. On Nimbix, migenius has set up a pre-configured RealityServer environment for you. Keep reading to learn how to sign up for Nimbix services and get RealityServer up and running.

In this, the second part of our article on transformations, I will introduce SRT (Scaling, Rotation, Translation) transformations. Unlike the previous article, this one has a lot less maths and shows you a simpler way to work with transformations in RealityServer. Additionally, the method allows for smooth, automatic interpolation of transformations over time, which is great for creating animations. Once things are moving you can also introduce motion blur for more realistic results. Read on to discover the ease of SRT transformations.
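
To illustrate why separate scaling, rotation and translation components interpolate so cleanly, here is a small self-contained Python sketch. It is not RealityServer’s implementation or data layout: the `SRT` class, the `(x, y, z, w)` quaternion representation of rotation and all helper names are assumptions made for this example. Scale and translation are blended linearly, while rotation uses spherical linear interpolation (slerp) so the object turns at a constant rate.

```python
import math
from dataclasses import dataclass


@dataclass
class SRT:
    """Hypothetical container for one keyframe (not a RealityServer type)."""
    scale: tuple        # (sx, sy, sz)
    rotation: tuple     # unit quaternion (x, y, z, w)
    translation: tuple  # (tx, ty, tz)


def lerp(a, b, t):
    """Component-wise linear interpolation between two tuples."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def slerp(q0, q1, t):
    """Spherical linear interpolation between two unit quaternions."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:  # take the shorter arc
        q1, dot = tuple(-c for c in q1), -dot
    if dot > 0.9995:  # nearly parallel: fall back to lerp for stability
        q = lerp(q0, q1, t)
    else:
        theta = math.acos(dot)
        s0 = math.sin((1.0 - t) * theta) / math.sin(theta)
        s1 = math.sin(t * theta) / math.sin(theta)
        q = tuple(s0 * a + s1 * b for a, b in zip(q0, q1))
    norm = math.sqrt(sum(c * c for c in q))
    return tuple(c / norm for c in q)


def interpolate(a, b, t):
    """Blend two SRT keyframes at parameter t in [0, 1]."""
    return SRT(lerp(a.scale, b.scale, t),
               slerp(a.rotation, b.rotation, t),
               lerp(a.translation, b.translation, t))


def quat_about_y(degrees):
    """Unit quaternion for a rotation about the Y axis."""
    half = math.radians(degrees) / 2.0
    return (0.0, math.sin(half), 0.0, math.cos(half))


if __name__ == "__main__":
    start = SRT((1.0, 1.0, 1.0), quat_about_y(0.0), (0.0, 0.0, 0.0))
    end = SRT((2.0, 2.0, 2.0), quat_about_y(90.0), (10.0, 0.0, 0.0))
    print(interpolate(start, end, 0.5))
```

Interpolating the entries of two 4×4 matrices directly would distort the object partway through a rotation, which is the main reason a decomposed representation is convenient for animation.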

Transformations are fundamental to working with 3D scenes and something that can be frequently confusing to those who haven’t worked in 3D before. In this, the first of two articles, I will show you how to encode 3D transformations as a single 4×4 matrix which you can then pass into the appropriate RealityServer command to position, orient and scale objects in your scene. In the second part I will dive into a newer method of specifying transformations in RealityServer called SRT transformations, which also allows for the easy animation of objects.
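
As a rough illustration of how scaling, rotation and translation combine into one 4×4 matrix, here is a minimal sketch in plain Python. It assumes a column-vector convention with the translation stored in the last column and builds an object-to-world matrix; the convention RealityServer expects, and whether a given command takes this matrix or its inverse, should be checked against the RealityServer documentation. All function names are hypothetical helpers for this example.

```python
import math


def identity():
    """4x4 identity matrix as a list of rows."""
    return [[1.0 if r == c else 0.0 for c in range(4)] for r in range(4)]


def translation(tx, ty, tz):
    m = identity()
    m[0][3], m[1][3], m[2][3] = tx, ty, tz
    return m


def scaling(sx, sy, sz):
    m = identity()
    m[0][0], m[1][1], m[2][2] = sx, sy, sz
    return m


def rotation_y(degrees):
    """Rotation about the Y axis (right-handed, column-vector convention)."""
    a = math.radians(degrees)
    c, s = math.cos(a), math.sin(a)
    m = identity()
    m[0][0], m[0][2] = c, s
    m[2][0], m[2][2] = -s, c
    return m


def multiply(a, b):
    """Matrix product a * b."""
    return [[sum(a[r][k] * b[k][c] for k in range(4)) for c in range(4)]
            for r in range(4)]


def transform_point(m, point):
    """Apply m to a 3D point (implicit w = 1)."""
    v = (point[0], point[1], point[2], 1.0)
    return tuple(sum(m[r][k] * v[k] for k in range(4)) for r in range(3))


if __name__ == "__main__":
    # With column vectors the rightmost matrix is applied first:
    # scale, then rotate, then translate.
    object_to_world = multiply(translation(10.0, 0.0, 0.0),
                               multiply(rotation_y(90.0), scaling(2.0, 2.0, 2.0)))
    print(transform_point(object_to_world, (1.0, 0.0, 0.0)))
```

The order of multiplication matters: swapping the translation and rotation factors would swing the object around the world origin instead of rotating it in place before moving it.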

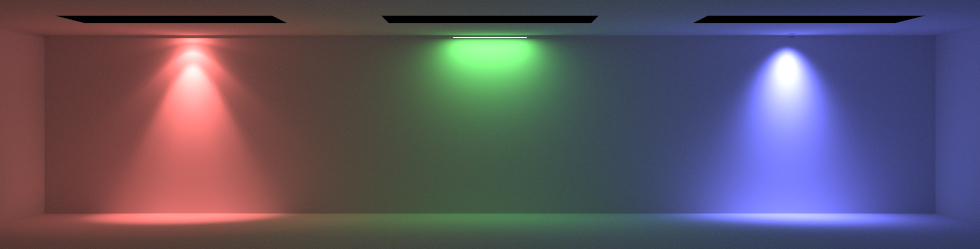

In this article I am going to show you how to add light sources to your RealityServer scene using the Web-services API. You will learn how to add several different types of lights, including a photometric light using an IES data file, an area light, a spot light and daylight. This will be a very simple example but will give you all of the pieces you need to programmatically add lighting to your scene. You can expand on the concepts shown here to make different types of lighting very easily.

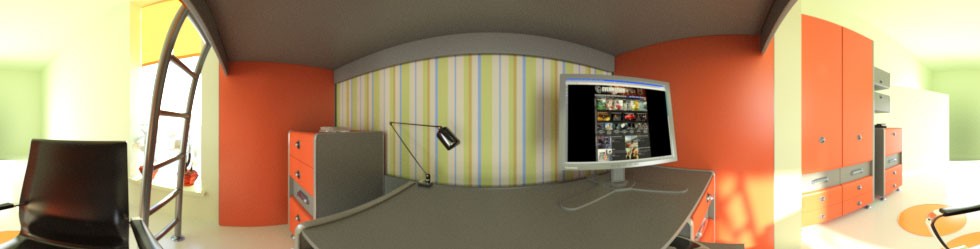

RealityServer 4.4 build 1527.46 has just been released, adding Iray 2016.1.1, which includes support for rendering stereo, spherical VR imagery suitable for viewing with devices such as the Oculus Rift, HTC Vive, Samsung GearVR, OSVR and Google Cardboard viewers. There are also numerous small additions and bug fixes and some other new features such as spectral rendering, but VR rendering is the headline item. In this article we will show you how to do simple VR rendering with RealityServer.

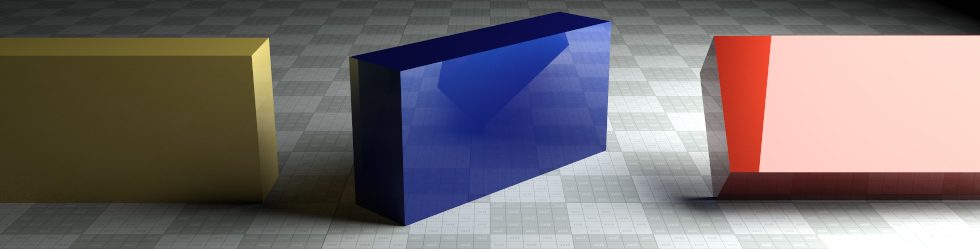

In this article I am going to show you how to create a simple 3D scene, completely from scratch using RealityServer. You will learn about the anatomy of a RealityServer scene and the different components that go into making it up, including options, groups, instances, cameras, geometry and environment lighting. While the scene will be very simple there will be many key principles of RealityServer and NVIDIA Iray demonstrated which you can expand on to build more complex scenes.

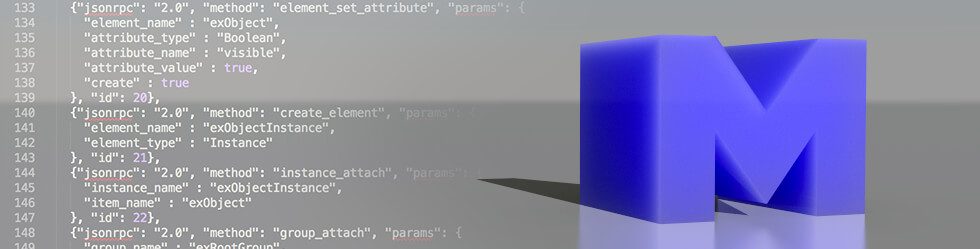

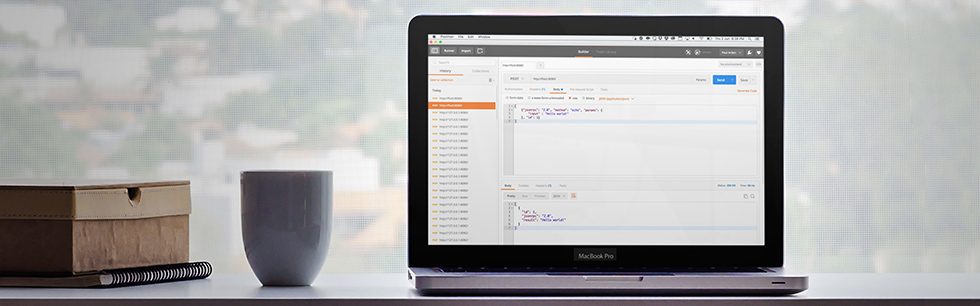

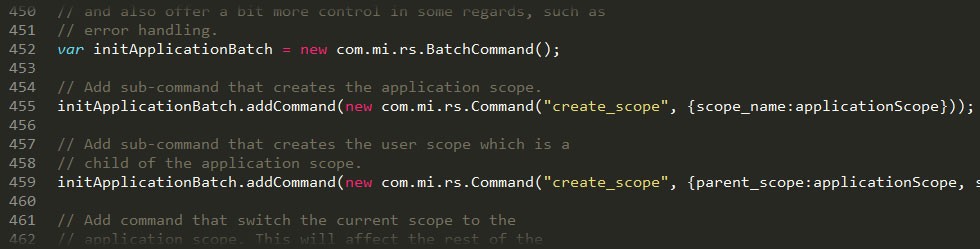

When getting started with RealityServer, many customers ask us the best place to begin in order to learn how RealityServer works. One of the best and most enjoyable ways we find is to explore the JSON-RPC API which remains the main way that RealityServer functionality is accessed. In this article we will provide an overview of how the RealityServer JSON-RPC API works and some of the best ways to explore and play with functionality exposed there. Whether you are new to RealityServer or a veteran user you will find some valuable pointers.
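
For readers who have not used JSON-RPC before, the sketch below shows the general shape of a JSON-RPC 2.0 batch request and how responses are matched back to requests by `id`. It only builds and inspects the JSON; no server is contacted. The command names `example_first_command` and `example_second_command`, their parameters, and the helper functions are placeholders invented for this illustration, so consult the RealityServer command documentation for the real command names and arguments.

```python
import json
from itertools import count

_request_ids = count(1)


def make_request(method, params=None):
    """Build one JSON-RPC 2.0 request object with a unique id."""
    return {
        "jsonrpc": "2.0",
        "method": method,
        "params": params or {},
        "id": next(_request_ids),
    }


def make_batch(calls):
    """Build a batch (a JSON array of requests) from (method, params) pairs."""
    return [make_request(method, params) for method, params in calls]


def split_responses(responses):
    """Separate successful results from errors, keyed by request id."""
    results, errors = {}, {}
    for response in responses:
        if "error" in response:
            errors[response["id"]] = response["error"]
        else:
            results[response["id"]] = response.get("result")
    return results, errors


if __name__ == "__main__":
    # Placeholder command names; not actual RealityServer commands.
    batch = make_batch([
        ("example_first_command", {"name": "demo"}),
        ("example_second_command", {}),
    ])
    print(json.dumps(batch, indent=2))
```

The resulting JSON string would be sent as the body of an HTTP POST to the server’s JSON-RPC endpoint, and the reply is an array of response objects that `split_responses` can sort into results and errors.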

Today we released RealityServer 4.4 build 1527.40. This incremental update focuses on features to help make development with RealityServer easier. It includes many elements which enable RealityServer to do more out of the box without having to write your own plugins. When new customers get their first look at RealityServer we often get many of the same questions about how to do certain things. We hope with this release and future releases to start covering many of these with off the shelf functionality. Most of the information here is also contained in the RealityServer release notes and documentation but if you don’t have RealityServer yet you can read about some of these new features below.

We have just released RealityServer 4.4 which includes the new NVIDIA Iray 2016.0. We will periodically release updated versions as new improvements and Iray updates become available. We’ll cover some of the highlights of this release here but users are strongly encouraged to read both the RealityServer release notes (relnotes.txt) and the Iray release notes (neurayrelnotes.pdf) provided with the release. Let’s take a look at those new features, some of which many of our users have been asking about for some time.

migenius is pleased to announce that we are one of the launch partners for the Onshape App Store, which has just entered private beta. We are making RealityServer available as an integrated application within Onshape running entirely in the cloud. Check out our dedicated RealityServer for Onshape product page for further details. Our integration with Onshape is still in beta at the moment, but it is already very usable. You can request early access to the Onshape App Store private beta from this link. If you want to know more please contact us.

So you have obtained RealityServer and installed your license server, what now? We frequently get questions about the best place to start learning about RealityServer and how to use it. As RealityServer is a large, very generalised platform it can be difficult to know where to start. This article provides some pointers on where to start and the best way to learn the basics.
