This is a big one! We’ve been in beta for a while so some of our advanced users have already had a chance to check out the great new features in RealityServer 6.0. Some headline items are a new fibers primitive, matte fog, toon post-processing, sheen BSDF, V8 engine version bump, V8 command debugging and much more. Check out the full article for details.

RealityServer 6.0 includes Iray 2020.0.2 build 327300.6313 which contains a lot of cool new functionality. Let’s take a look at a few of the biggest.

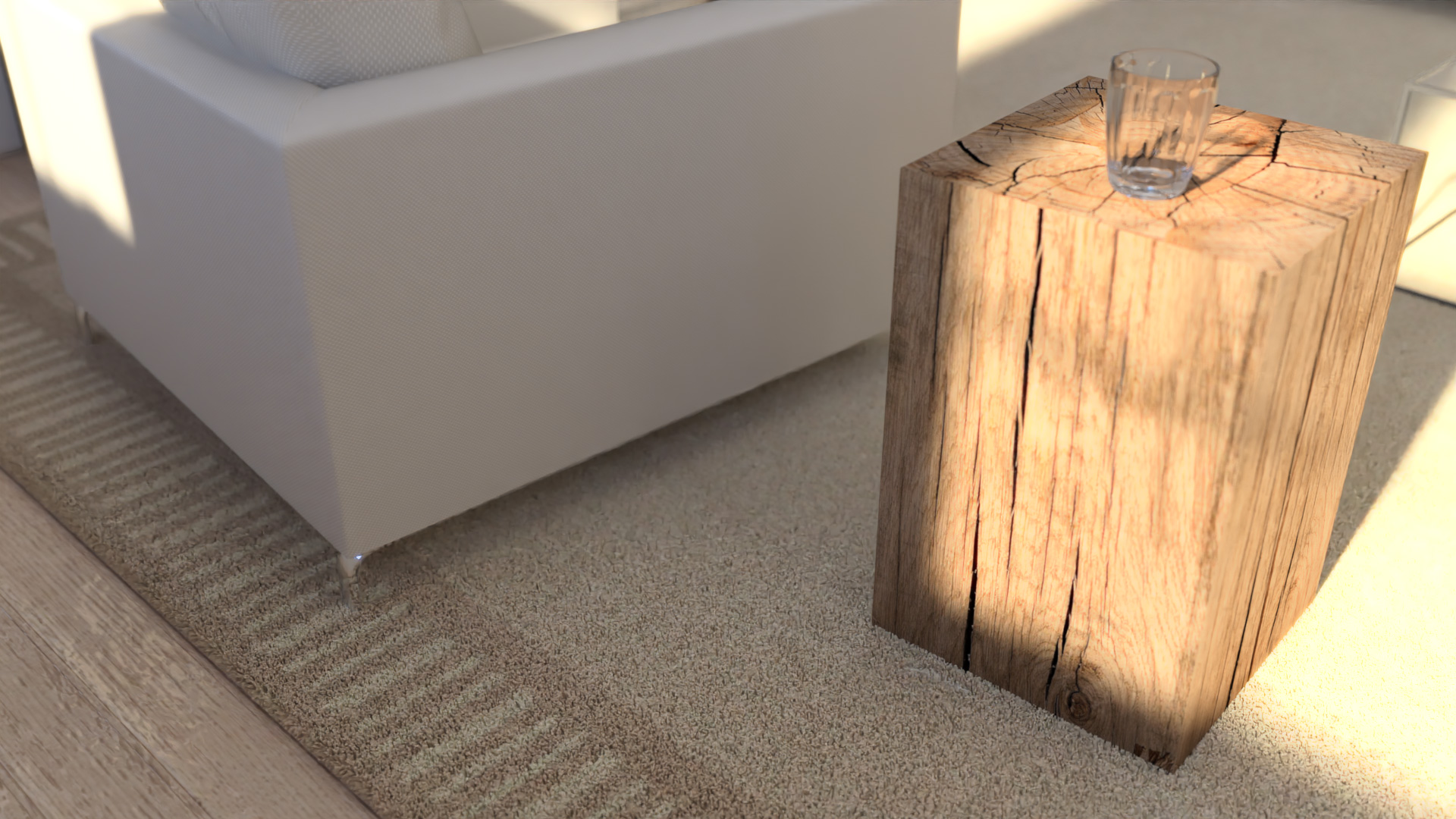

In many products this might be called hair rendering, however fibers as implemented by Iray can be used for almost any fiber-like object, for example hair, grass, carpets, fabric fringes and more.

Fibers are a new type of element you can create and consist of a set of points defining a uniform cubic B-spline and radii at those points. This forms a kind of smooth extruded cylinder with varying thickness. This lightweight primitive is intersected during ray-tracing directly, without creating any explicit polygons or mesh geometry. This allows very large numbers of primitives to be handled.
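To make the geometry a little more concrete, here is a minimal sketch of evaluating one segment of a uniform cubic B-spline with a radius at each control point. The `evaluateFiber` helper and the `{x, y, z, radius}` point layout are assumptions made purely for illustration; they are not the RealityServer fibers API or its data format.

```javascript
// Evaluate one segment of a uniform cubic B-spline for scalar control values.
// p0..p3 are four consecutive control values and t runs from 0 to 1.
function bspline(p0, p1, p2, p3, t) {
  const t2 = t * t;
  const t3 = t2 * t;
  const b0 = (1 - 3 * t + 3 * t2 - t3) / 6;
  const b1 = (4 - 6 * t2 + 3 * t3) / 6;
  const b2 = (1 + 3 * t + 3 * t2 - 3 * t3) / 6;
  const b3 = t3 / 6;
  return b0 * p0 + b1 * p1 + b2 * p2 + b3 * p3;
}

// Hypothetical fiber layout: an array of control points, each with a radius.
// Segment n uses control points n, n + 1, n + 2 and n + 3.
function evaluateFiber(points, segment, t) {
  const [a, b, c, d] = points.slice(segment, segment + 4);
  return {
    x: bspline(a.x, b.x, c.x, d.x, t),
    y: bspline(a.y, b.y, c.y, d.y, t),
    z: bspline(a.z, b.z, c.z, d.z, t),
    radius: bspline(a.radius, b.radius, c.radius, d.radius, t)
  };
}

// A straight fiber along the x axis that thins out towards the tip.
const fiber = [
  { x: 0, y: 0, z: 0, radius: 0.04 },
  { x: 1, y: 0, z: 0, radius: 0.03 },
  { x: 2, y: 0, z: 0, radius: 0.02 },
  { x: 3, y: 0, z: 0, radius: 0.01 }
];
const middle = evaluateFiber(fiber, 0, 0.5);
```

Note that a uniform cubic B-spline generally does not pass through its control points, so the curve you get is a smoothed version of the point sequence you supply.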

Think millions. Many places you’d like to use fibers will need lots of them, so this efficiency is essential. In testing we have also seen that RTX based GPUs with RT Core hardware ray-tracing support see even bigger speedups on scenes with a lot of fiber geometry compared to regular scenes. Creating fibers can be tricky since there isn’t really much software which authors this type of geometry. Tools such as the XGen feature of Autodesk Maya would be one example. However many of our customers just want to generate a somewhat random distribution of fibers over existing mesh geometry.

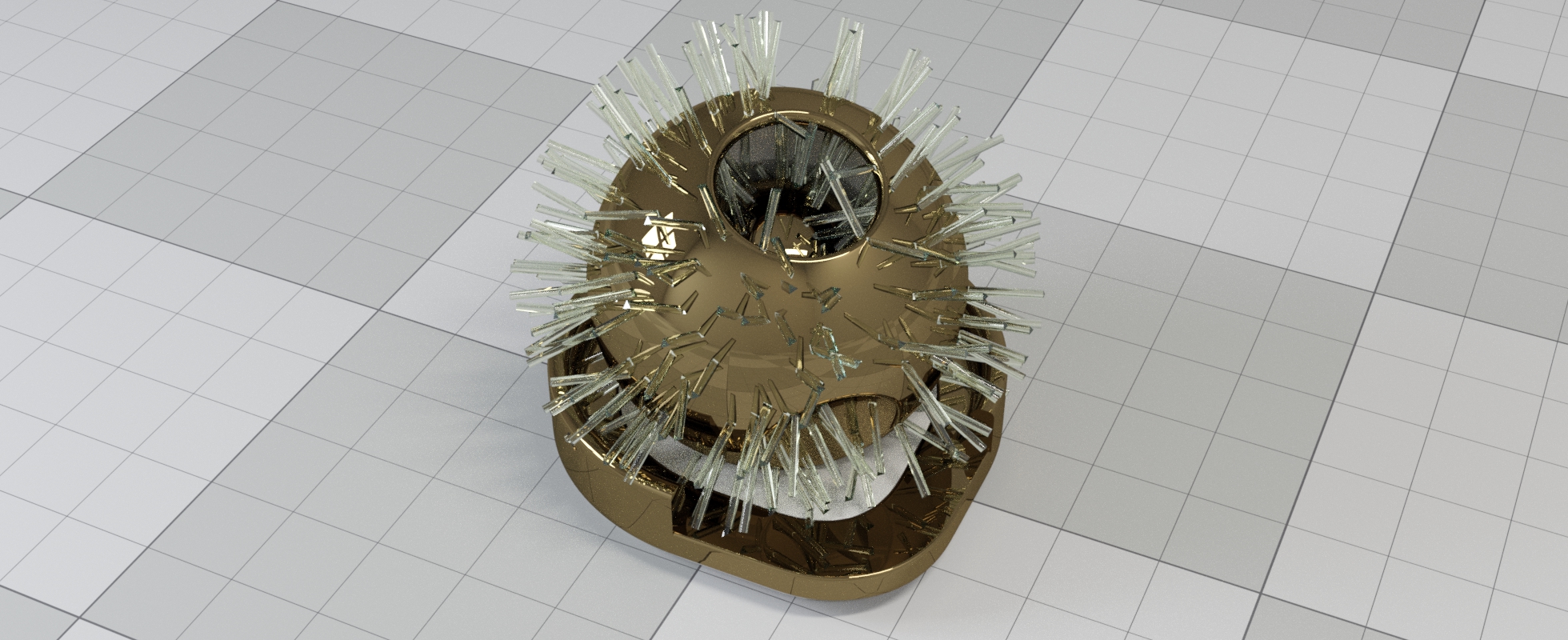

To make that simple we now have a new generate_fibers_on_mesh command which takes a mesh and some simple parameters and generates fibers for you. The topiary example above was generated this way by passing the geometry of the big 6 into the command. If you want to directly control every aspect of your fibers we also have the generate_fibers command which allows you to provide a JSON based or binary based description of the fibers geometry you want to create. We’ve also included V8 wrapper classes for fibers similar to those used for Polygon_mesh and Triangle_mesh. This is the best way to make use of the binary data input.

Fibers can also be read from .mi files using the hair object supported there from the mental ray days. As various Iray plugins such as Iray for Maya start to support fibers they will be able to export this data from those tools into a .mi file that can be read by RealityServer. Of course you can also create fibers from C++ plugins so if you want to use your own custom fibers format you can implement that as well. To finish up on fibers here is another great image, this time with 30M fibers in the scene and varying the fiber colour using textures.

When rendering larger scenes, the effects of aerial perspective can be critically important to getting a realistic result. You probably know this most from seeing the shift towards blue in features as you look out to the horizon over a landscape. In theory you could simulate this already in previous releases by enclosing the entire scene in a huge box and applying a material with appropriate volume properties to the box however this would significantly increase rendering time. Now with the new matte fog feature there is a much faster way.

In the image above you can see the original scene without matte fog applied on the left and on the right with matte fog enabled. The matte fog image gives a much more realistic impression of this type of scene. Until you see it with the fog it can be hard to put your finger on what is actually wrong with the original image. Enabling matte fog is easy, you just need to enable a few attributes on your scene options, see the Iray documentation for details.

The matte fog technique is applied as a post effect, similar to the bloom feature that was introduced some time ago. It uses depth information combined with the rendering result to apply a distance based fog. Because it runs as a GPU post process it adds very little time to rendering. Unlike a true volumetric simulation (which as mentioned earlier, is still possible), matte fog will not produce effects from specific light sources or so called god rays. For these effects you will still need to perform a full volume simulation, however to get a simple aerial perspective effect this feature is perfect.
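To give a feel for what a depth based post effect of this kind does, here is a minimal sketch that blends a rendered pixel towards a fog colour as distance increases. It assumes a simple exponential falloff, and the `density` and `color` settings are hypothetical names; the actual formula and attribute names used by Iray are described in the Iray documentation and may differ.

```javascript
// Blend one pixel towards the fog colour based on its distance from the camera.
// Assumption: transmittance falls off exponentially with depth.
function applyFog(color, depth, fog) {
  const transmittance = Math.exp(-depth * fog.density);
  const blend = (surface, haze) =>
    surface * transmittance + haze * (1 - transmittance);
  return {
    r: blend(color.r, fog.color.r),
    g: blend(color.g, fog.color.g),
    b: blend(color.b, fog.color.b)
  };
}

// Hypothetical settings: a bluish haze that becomes noticeable over hundreds of units.
const haze = { density: 0.002, color: { r: 0.6, g: 0.7, b: 0.9 } };
const nearPixel = applyFog({ r: 0.2, g: 0.5, b: 0.1 }, 10, haze);
const farPixel = applyFog({ r: 0.2, g: 0.5, b: 0.1 }, 2000, haze);
```

Because each pixel only needs its own colour and depth, an effect like this is cheap compared with a volumetric simulation, which is also why it cannot reproduce light shafts from individual light sources.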

Another new post processing effect being introduced in this version is toon post processing. This allows you to produce non-photorealistic rendering results using RealityServer such as might be used for cartoons, illustrations or diagrams. The toon post processing effect can be applied to the normal result canvas, ambient occlusion canvas or the BSDF weight canvas (also introduced in this version). It affects both the shading and also adds outlines to the objects. Here is a quick example of what you can achieve.

This image was made by applying the toon effect to the BSDF weight canvas which encodes the albedo of the materials. It then uses a faux lighting effect to give shading which is quantized to give the banded appearance typical for cartoons. You can control the colour of the outlines, the level of quantization or also choose to show the fully faux shaded appearance or no shading at all. Object IDs are used to determine where the edges of the objects are located, so in cases where you have objects with the same material that needs to show edges you should ensure they have unique object ids assigned with the label attribute. You can also set the label attribute to 0 on an object if you want to selectively disable the outlining. Also note that the toon feature works best when progressive_aux_canvas is enabled on your scene options.
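The two ideas in this paragraph, quantized shading and outlines derived from object IDs, can be sketched in a few lines. This is only an illustration of the general technique under stated assumptions: the `quantize` and `isEdge` helpers are hypothetical, and the rule used here for label 0 (pixels with label 0 never start an outline) is a guess at the behaviour rather than Iray's documented implementation.

```javascript
// Snap a shading value in [0, 1] to a fixed number of bands.
// levels <= 0 leaves the shading untouched (no banding).
function quantize(shade, levels) {
  if (levels <= 0) return shade;
  const clamped = Math.min(Math.max(shade, 0), 1);
  return Math.round(clamped * levels) / levels;
}

// Decide whether the pixel at (x, y) sits on an outline by comparing its
// object label with the labels of its right and bottom neighbours.
// labels is a flat array of width * height object IDs.
function isEdge(labels, width, height, x, y) {
  const label = labels[y * width + x];
  if (label === 0) return false; // assumption: label 0 disables outlining
  const right = x + 1 < width ? labels[y * width + x + 1] : label;
  const below = y + 1 < height ? labels[(y + 1) * width + x] : label;
  return right !== label || below !== label;
}

// Two objects side by side in a tiny 3 x 1 image.
const labels = [1, 1, 2];
const outline = [0, 1, 2].map((x) => isEdge(labels, 3, 1, x, 0));
```

This also shows why two objects sharing a material still need distinct labels: with identical labels the comparison never finds a boundary between them.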

To support all of these features the old canvas_content parameters of our RealityServer render commands have been changed to now accept an object in addition to a string. This object is needed since canvas content type can now contain various parameters. For using the toon effects you need to use a V8 command or named canvases so that you can use the render_to_canvases command for this purpose. It’s definitely simplest to use in V8, here is a quick example command which renders a toon image similar to the above of one of our default scenes.

    const Scene = require('Scene');
    const Camera = require('Camera');

    module.exports.command = {
        name: 'render_toon',
        description: 'Render a scene with the toon effect.',
        groups: ['javascript', 'examples'],
        execute: function() {
            let scene = Scene.import('test', 'scenes/meyemII.mi');
            scene.options.attributes.set(
                'progressive_rendering_max_samples', 10, 'Sint32');
            let camera = scene.camera_instance.item.as('Camera');
            let canvases = RS.render_to_canvases({
                canvases: [
                    {
                        name: 'weight',
                        pixel_type: 'Rgba',
                        content: {
                            type: 'bsdf_weight',
                            params: {}
                        }
                    },
                    {
                        name: 'toon',
                        pixel_type: 'Rgba',
                        content: {
                            type: 'post_toon',
                            params: {
                                index: 0,
                                scale: 4,
                                edge_color: {
                                    r: 1.0, g: 1.0, b: 0.0
                                }
                            }
                        }
                    }
                ],
                canvas_resolution_x: camera.resolution_x,
                canvas_resolution_y: camera.resolution_y,
                renderer: 'iray',
                render_context_options: {
                    scheduler_mode: {
                        type: 'String',
                        value: 'batch'
                    }
                },
                scene_name: scene.name
            });
            return new Binary(
                canvases[1].encode('jpg', 'Rgb', '90'), 'image/jpeg');
        }
    };

The main difference from how you would have done this before is that, in the canvas definitions passed to the render_to_canvases command, the content property is specified as an object. You can see that the second canvas being rendered has three extra parameters. This example renders two canvases, the first is the BSDF weight and the second is the post toon effect which uses the first canvas to do its work. We then encode and return the second canvas. Changing the scale parameter of the toon effect gives some interesting results. In the example below you can see the difference between setting this to 0 and 4.

Please refer to the Iray documentation for more details on how to use the toon effect feature and what the different parameters do. While RealityServer rendering commands still accept the older canvas_content string definitions, we definitely recommend updating your applications if you are rendering anything other than the default canvas_content (result) to use the new method of specifying canvas contents to ensure future compatibility.

Iray 2020 comes with support for MDL 1.6 which adds several new features. One of the major ones is a new BSDF specifically for dealing with sheen. This phenomenon is particularly important for realistic fabrics and in the past other rendering engines would often use non-physical effects such as falloff to fake this. Now with the new sheen_bsdf you have a physically based option for proper sheen. Here is an example of how big a difference sheen can make.

In this scene the image on the left is using pure diffuse fabrics while on the right we have added sheen. The effect is subtle and difficult to quantify but the sheen is often what results in a much more fabric like appearance. To help you try out this functionality we’ve included a new add_sheen MDL material in a new migenius core_definitions MDL module. You’ll find this at mdl::migenius::core_definitions::add_sheen and it allows you to add a sheen layer on top of any existing material.

The Structural Similarity Index, or SSIM for short, helps determine the similarity between an image and a given reference image. How can this help us when rendering since we don’t have a reference image? In fact that is exactly what we are trying to create by rendering in the first place. Iray 2020 uses deep-learning techniques to predict SSIM values against an imaginary converged image, using training data from a large number of pairs of partially and fully converged images generated with Iray. How does knowing the SSIM value actually help us here?

The image above is a heatmap generated by the SSIM predictor in Iray and it shows which parts of the image have converged and which still require further work. The brighter areas are closer to the imagined reference image while the darker areas are further from it in terms of similarity. Using this information it is possible to predict at which iteration Iray will reach a particular target similarity and how long it will take to get there. If you’ve dealt with having to build heuristics for your application to set the iteration count or nebulous quality parameters in Iray, especially for widely varying scenes, then you can probably see where this is going and why you would want it.

In the end the goal will be for you to be able to set a quality value which is your SSIM target value and have this control when rendering terminates as well as provide feedback to users on how long rendering is expected to take. Right now however this feature should be considered more of a preview of the type of automated termination conditions that are coming in future releases. There are a few important restrictions for now which mean you may not be able to use it in your application.

So if you want to use the denoiser or work with very large output resolutions it might not be the right solution for you. Right now you would also need to implement the logic for using SSIM to set termination conditions yourself since at the moment it only reports information to you, it does not take any actual action. In RealityServer you can see the information coming back from the SSIM predictor by calling the render_get_progress command with the area parameter set to estimated_convergence_at_sample or estimated_convergence_in to get the iteration at which the target SSIM value will be reached and the amount of time to get there respectively. In theory your application could start rendering just enough to get the estimates and then use these to set the termination attributes and report the estimated time.
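As a rough sketch of that last idea, the function below turns a pair of estimates into termination settings and a message for the user. Everything about its shape is an assumption: the `estimate` object is a hypothetical container for the two values you would read back from render_get_progress, the limits are application-defined, and `progressive_rendering_max_time` is assumed here to be the time-based counterpart of `progressive_rendering_max_samples`. Check the Iray documentation for the exact attributes and for how the reported values should be interpreted.

```javascript
// Turn SSIM predictor estimates into termination settings.
// estimate: { sample, seconds } (hypothetical shape, filled from render_get_progress)
// limits:   { maxSamples, maxSeconds } (application-defined upper bounds)
function planTermination(estimate, limits) {
  const valid = (value) => Number.isFinite(value) && value > 0;
  if (!valid(estimate.sample) || !valid(estimate.seconds)) {
    return null; // no usable estimate yet, keep rendering and ask again later
  }
  const samples = Math.ceil(estimate.sample);
  const seconds = Math.ceil(estimate.seconds);
  return {
    progressive_rendering_max_samples: Math.min(samples, limits.maxSamples),
    progressive_rendering_max_time: Math.min(seconds, limits.maxSeconds),
    capped: samples > limits.maxSamples || seconds > limits.maxSeconds,
    message: `Estimated render time: about ${seconds}s`
  };
}

const plan = planTermination(
  { sample: 412.3, seconds: 95.2 },
  { maxSamples: 1000, maxSeconds: 60 }
);
```

Keeping hard upper bounds alongside the estimates is a deliberate choice in this sketch, since the predictor only reports information and a poor early estimate should not be able to commit a server to an unbounded render.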

If you would like to obtain an image like the one shown above to get some insight into what the SSIM predictor is seeing you can render out the convergence_heatmap canvas type, setting its index parameter to the index of the canvas you want to diagnose. This works in the same way as the toon post processing canvas setup described above. There are various Iray attributes to control the predictor, post_ssim_available to allow it to be used, post_ssim_enabled to turn it on and post_ssim_predict_target to set the target value between 0 and 1 which is basically the quality. A value of 0.99 would usually yield a production quality image and 0.98 would be suitable for medium quality results. There is also a post_ssim_max_memory option to control memory usage but unless you really need to change this you should let Iray choose the memory to use. All of these options are set on the Options element for your scene.

Look for further improvements to the SSIM system in future releases. If you try it out in RealityServer 6.0 we’d love to hear more about your experiences with this feature.

You might have noticed, particularly when rendering with very low iteration counts, that the shadows on the virtual ground plane in Iray Interactive can have a blotchy appearance, even when using the AI Denoiser. This is because by default there is a filter applied to these shadows to smooth them out, however this was implemented before the AI Denoiser existed. Unfortunately the filter destroys the denoiser’s ability to detect the noise on the ground plane and remove it. There is now a new boolean option irt_ground_shadow_filter which if disabled (it is enabled by default to preserve the current behaviour) will turn off this filter and let the denoiser operate on the ground plane.

In the image above you can see the results with the default settings where the filter is applied on the left. Note the blotchiness in the ground shadow while the noise has been removed from the rest of the image. On the right you see the effect of turning off the filtering so that the denoiser can work on the ground as well. Note that both of these images are just 4 iterations with Iray Interactive and the AI denoiser enabled. Of course neither gives a perfect result and at much higher sample counts the difference is much less obvious, however many of our customers are creating applications where they are targeting very low rendering times and making heavy use of denoising and low iteration counts. For those cases this can help a lot with ground shadows.

As with most Iray releases you will also find a lot of bug fixes and smaller improvements incorporated, here’s a few relevant ones but be sure to read the neurayrelnotes.pdf file for all the changes.

The AI Denoiser now gives significantly better results for some problematic scenes that were reported by users. While the vast majority of scenes gave good results already, there were a few specific scenes with certain types of features (in particular thin features over other elements) which could cause artifacts. This has been improved a lot in this release.

While the hair BSDF of MDL 1.5 had actually been in Iray in the previous release, it wasn’t actually of much use since there was no hair primitive to use it with, that of course has now changed but in general the update to MDL 1.6 brings with it all of the changes present in MDL 1.6 as well. If you are writing your own MDL content some of these may be important, for example changes to how the modules are handled (particularly the removal of weak relative module references). A new multiscattering_tint parameter has also been added to all glossy BSDFs to address energy conservation in certain situations. To get a full list of the changes look to the MDL 1.6 specification (you can find it in the RealityServer Document Center) and scroll to the last page where you will see a list of all changes.

There is a new iray_rt_low_memory option for users that are operating in significantly memory constrained environments, this allows the acceleration structures for ray-tracing to consume less memory. This is only relevant for pre-Turing GPUs at the moment but can be useful for people using older, lower-end cards.

Obviously the update to Iray 2020 brings a lot of new functionality, however we didn’t stop there. There are plenty of RealityServer specific updates as well and while these might not have as many pretty pictures to go with them, we think developers will find them particularly exciting.

While an update to the V8 engine might not seem too significant, it is actually a pretty big jump and adds a lot of core JavaScript language features. Previously we were on V8 5.6, so if you are a JavaScript guru you know this is a big deal. Google have put a lot of focus into the performance of V8 and there have been significant improvements in this area, meaning your custom V8 commands will run faster. If you’ve been waiting for some of the great new language features in JavaScript such as nullish coalescing, optional chaining and more then you finally have your chance to use them. Also features like the padStart and padEnd methods for strings can come in handy, particularly when making element names. If you’re really brave you can try out WebAssembly support (WASM), yes, even though we have a native C++ API already, you might find reasons to do this or maybe you’re just adventurous!
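Here is a small sketch of those language features working together to build zero-padded element names. The `elementName` helper and its `options` argument are hypothetical and only serve to show the syntax.

```javascript
// Build an element name such as "fiber_0007".
// options is optional; digits and suffix fall back to defaults when absent.
function elementName(prefix, index, options) {
  // Optional chaining (?.) tolerates a missing options object, and nullish
  // coalescing (??) only falls back on null or undefined, so digits: 0 is kept.
  const digits = options?.digits ?? 4;
  const suffix = options?.suffix ?? '';
  return `${prefix}_${String(index).padStart(digits, '0')}${suffix}`;
}

const padded = elementName('fiber', 7);
const custom = elementName('fiber', 7, { digits: 2, suffix: '_inst' });
const unpadded = elementName('fiber', 7, { digits: 0 });
```

The last call is where `??` differs from the older `||` idiom: with `||` a value of 0 would have been treated as missing and replaced by the default of 4.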

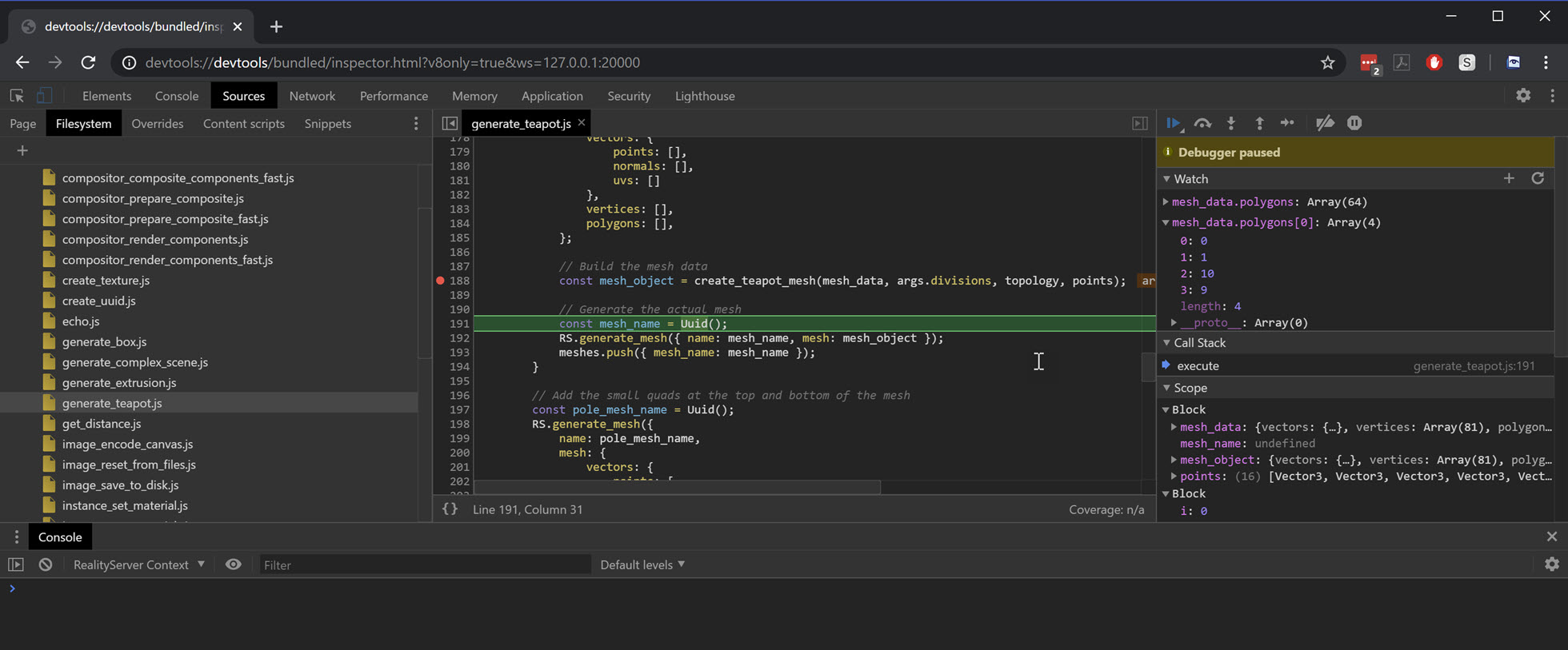

Ok, for anyone that spends a lot of time writing custom V8 JavaScript commands with RealityServer this is huge. In RealityServer 6.0 you can debug your V8 commands using the Chrome DevTools. This means you can set breakpoints, inspect values, step through your code, all in a familiar environment. It’s really a game changer for productivity, no more console.log debugging your commands! It’s simple to enable, just check out your realityserver.conf file and in your V8 configuration you’ll see the following line commented out, just un-comment it to enable the inspector.

    <user v8>
    ...
    inspector 20000
    ...
    </user>

When you run RealityServer now it will listen on port 20000 (or whatever you selected) for inspector connections. In Chrome you then just need to go to your address bar and drop in a link like this.

    devtools://devtools/bundled/inspector.html?v8only=true&ws=127.0.0.1:20000

Of course you can replace 127.0.0.1 with whatever you like if your server is remote and the port as well. Now a word of warning, do not enable this feature on production servers. In order to debug V8 commands, RealityServer switches to a single threaded model so only a single command can be in flight at a time. There are obviously also security implications to leaving this port open, so make sure this is not enabled outside debugging. When you navigate to the inspector you’ll be greeted with an interface similar to this one.

You can see a few things going on here, a breakpoint was set and hit and we’ve stepped on one line from that and are watching a couple of variables. You can add your V8 directories to the sources explorer to find your commands and any console output is now redirected to the console in the inspector so you can see it there.

You’ve actually always been able to use Promises in V8 commands however they probably didn’t work quite the way you would expect, in fact we had never intended to support them, it was more an accident of them being in V8 itself. In RealityServer 6.0 we’ve now made Async and Promise support more useful and also included async versions of methods on some of our built in classes like fs and http. This means your command’s execute function can now be async in a useful way. The command itself will not return until all Promises are resolved or rejected, so keep in mind this is not intended for kicking off long running jobs and walking away. With this new functionality you can now do something like this.

    module.exports.command = {
        ...
        execute: async function(args) {
            let data = await fs.async.readFile(args.filename, { encoding: 'utf8' });
            return JSON.parse(data);
        }
    };

Both the fs and http classes now have an async module within them which exposes async versions of the regular synchronous methods. You can combine these with language features such as Promise.all() and friends. If a Promise is rejected then the command will throw an error. In cases where you want to have specific behaviour you need to wrap your calls in a try catch block.

    module.exports.command = {
        ...
        execute: async function(args) {
            try {
                let data = await fs.async.readFile(args.filename, { encoding: 'utf8' });
                return JSON.parse(data);
            } catch(error) {
                return({});
            }
        }
    };

There actually are not that many native JavaScript features that make use of Promises, with the notable exception of the WebAssembly API. Without using Promises you can only load trivially small WebAssembly programs, the API for loading larger programs requires the use of Promises. For example here is a very simple command which loads a .wasm file, compiles and executes it and returns the result.

    module.exports.command = {
        ...
        execute: async function() {
            const wasm = await fs.async.readFile('main.wasm');
            const module = await WebAssembly.compile(wasm.buffer);
            const instance = await WebAssembly.instantiate(module);
            const result = instance.exports.main();
            return result;
        }
    };

WebAssembly is a whole topic in itself of course and we won’t cover it here, however there are some pretty interesting possibilities. You can of course also just make your own Promises if you would like them for some reason.

    module.exports.command = {
        ...
        execute: async function(args) {
            let result = await new Promise((resolve, reject) => {
                if (args.input === 'resolve') {
                    resolve('I resolved');
                } else {
                    reject('I rejected');
                }
            });
            // Return the resolved value so the command produces a result
            return result;
        }
    };

Note that, as with the built in functionality, if the reject path is taken in the case above the command itself will throw an error. So you need to use a try catch block there as well if you want different behaviour. We’ll likely need to make more use of async functionality in the future as JavaScript expands and more built in features use it, so this new feature lays some of the ground work for that. We also added one more related feature, there is now a global sleep() function you can call. You might wonder where you would want this however it can be very helpful when you want to implement some kind of retry logic with exponential back-off for example. The sleep function returns a Promise which resolves to undefined when the specified time is reached.

    module.exports.command = {
        ...
        execute: async function(args) {
            await sleep(5000);
            return 'Waited 5s!';
        }
    };
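The retry scenario mentioned above could look something like the following sketch. The `retry` and `backoffDelay` helpers are hypothetical, and the code falls back to a setTimeout based wait when the global sleep() is not available so that it can also be tried outside RealityServer. The task passed in is assumed to be something like an http request rather than another V8 command.

```javascript
// Use the global sleep() when running inside RealityServer 6.0, otherwise
// fall back to an equivalent built on setTimeout.
const wait = typeof sleep === 'function'
  ? sleep
  : (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Delay doubles with each attempt (base, 2x, 4x, ...) up to a maximum.
function backoffDelay(attempt, baseMs, maxMs) {
  return Math.min(baseMs * 2 ** attempt, maxMs);
}

// Run an async task, retrying with exponential back-off when it rejects.
// The last error is rethrown once all attempts have been used.
async function retry(task, { attempts = 5, baseMs = 100, maxMs = 5000 } = {}) {
  let lastError;
  for (let attempt = 0; attempt < attempts; attempt++) {
    try {
      return await task(attempt);
    } catch (error) {
      lastError = error;
      if (attempt < attempts - 1) {
        await wait(backoffDelay(attempt, baseMs, maxMs));
      }
    }
  }
  throw lastError;
}
```

Inside a command you would call something like `await retry(() => fetchSomething())` from an async execute function, where `fetchSomething` stands in for whatever async call you want to retry. Since the command does not return until its Promises settle, it is worth keeping the number of attempts and the maximum delay small.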

There are still some restrictions on this functionality in RealityServer 6.0. Right now it is not possible to call another V8 command after an await expression or in the then, catch or finally handlers of a Promise. This restriction will be removed in a future release.

When building V8 commands you often want to share settings between your various commands and allow users to configure these. In the past the only real way to do this was to explicitly load a JSON file or another JavaScript file which contained this information. However this means mixing configuration and logic in the same place which is often undesirable. It is now possible to access the RealityServer configuration information from within your V8 commands. Here is a quick example.

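    const config = RS.Configuration.user.find(config => config.value === 'migenius');
    const my_feature = config.subitem.find(config => config.name === 'my_feature');
    // find() returns the matching item, so compare its value rather than the item itself
    if (my_feature && my_feature.value === 'on') {
        // Do something
    }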

You’ll see here there is a new RS.Configuration object and this holds all of the information parsed from the RealityServer configuration files (by default realityserver.conf and whatever you might include). In the above example we are first retrieving a user configuration directive called migenius and then an item from within it called my_feature. Many of the built-in directives can be accessed directly on RS.Configuration and this maps very closely to the internal mi::rswservices::IConfiguration class in our C++ API. The easiest way to get a feeling for what is available is to just dump RS.Configuration as JSON and take a look through it.

Within V8 you can now crop any Canvas and get back the cropped version and optionally convert the pixel type during the cropping operation. We had a few users who were resorting to external tools for this or writing the cropping code in pure JavaScript which was quite a bit slower so we thought we would add native support.

    let canvas = image.canvas;
    // Arguments are xl, yl, xh, yh being the left, bottom, right and top edges of the crop
    let cropped = canvas.crop(10, 15, 50, 35);
    // Optionally convert pixel type while cropping
    let cropped_rgba = canvas.crop(10, 15, 50, 35, 'Rgba');

It has been a long standing issue with Iray and in turn RealityServer that when you encounter GPU failures such as a GPU fault, memory being exhausted or other issues causing the GPU to stop working, the only place you find out about this is the log output. This means you had no way to programmatically handle GPU errors or other fatal issues that are encountered. Part of what makes this so difficult to deal with is that Iray has a strict no-exceptions policy (for performance reasons) which means it cannot throw exceptions that could be caught by other code.

To handle this without exceptions Iray 2020 introduces a new concept of log entry tagging and message details. Log entries now come with a Message_details structure which tells you whether the message was associated with a specific GPU device and also a Message_tag which gives details on what actually happened. The idea is that these are able to be acted on programmatically, so there is no need to try and parse text out of logs or other brittle measures that would break with even minor Iray changes.

In RealityServer 6.0 you can exploit this new functionality by implementing an mi::rswservices::ILog_handler in a C++ plugin. We ship a small example in the src/log_handler directory of your RealityServer installation showing how this can be done. If you implement a custom log handler you can programmatically inspect all log details and look for specific tags which identify GPU problems or other activity you are interested in and then take action programmatically. This finally provides a mechanism by which you can react to GPU errors.

For quite a while the RealityServer Client Library has been the recommended means of using RealityServer when building browser based applications. However all of the example applications in RealityServer were still using the legacy client library. RealityServer 6.0 updates all sample applications to use the new, modern JavaScript client library. We’ve also removed some other examples which really didn’t represent best practice use of RealityServer.

UAC (User Access Control) and automatic session creation are incredibly useful features for multi-user RealityServer applications. Unfortunately in previous versions while you could use our new WebSocket based client libraries, you still had to send traditional requests in order to keep sessions alive as UAC was not aware of what was happening in a WebSocket stream. Now UAC is aware of WebSocket streams and having an active stream will automatically keep the session alive for you.

Pretty much every customer who uses RealityServer also installs the so called “Extras” plugins we provide which added commands such as camera_frame, camera_auto_exposure, set_sun_position and more as well as plugins to help with setting content disposition headers for image saving and other handy functionality. These are all now finally included as core features so there is no need to install extra plugins when setting up RealityServer. So if you’re wondering where the extras plugin download has gone, it is no longer needed.

We’ve included the V8 command render_batch_irt for some time and it basically just renders using Iray Interactive in a loop to simulate batch rendering as enabled in Iray Photoreal by using the batch scheduler render context option. While Iray Interactive also recognises the batch scheduler mode, it annoyingly handles it differently, instead of running to termination in one step it returns after each step and needs to be called multiple times until it reaches the termination conditions. Our V8 command worked around this but had some annoying consequences, in particular switching between renderers meant changing the command you called instead of just one parameter. With RealityServer 6.0, if you enable the batch scheduler when using Iray Interactive mode it now behaves the same way as Iray Photoreal and renders to completion, even when using the standard render command. If you use the render_batch_irt command it will still work and it uses the new functionality (which is also faster), however we recommend switching to just use regular render commands now.

With the new functionality to allow Iray Interactive batch rendering, lightmapping with Iray Interactive is now possible as well. While this was technically possible in earlier versions it would only ever render the first iteration which wouldn’t be of much value. Now you can use Iray Interactive for light mapping just like Iray Photoreal. Another feature we added to the lightmap renderer is control over the amount of padding which is added to the lightmapping elements within the result texture. This is desirable if you want to implement your own inpainting solution or apply an external denoiser to lightmaps (since we don’t support AI denoising of lightmaps in RealityServer).

On the left you can see the lightmap image with no padding applied and on the right with 5 padding passes (the default value). The padding process basically extends the edges of the lightmap regions using the boundary pixels and is intended to avoid edge artifacts that can appear when the textures are used and filtering causes the black areas to intrude on the area being textured. As such, if you render without the padding in order to apply your own post processing, it is still important that you do something similar to your images afterwards to avoid artifacts.
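If you do turn padding off and need to do something similar yourself, a simple dilation pass is one way to approach it. The sketch below is an assumption about the general technique, not the algorithm RealityServer uses: it works on a single channel, takes a hypothetical coverage mask marking which texels were actually rendered, and grows the covered region by one texel per pass using the average of the covered neighbours.

```javascript
// One padding pass: every uncovered texel that touches a covered texel takes
// the average of its covered 4-neighbours and becomes covered itself.
// values and covered are flat arrays of width * height entries.
function padOnce(values, covered, width, height) {
  const outValues = values.slice();
  const outCovered = covered.slice();
  const offsets = [[1, 0], [-1, 0], [0, 1], [0, -1]];
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const i = y * width + x;
      if (covered[i]) continue;
      let sum = 0;
      let count = 0;
      for (const [dx, dy] of offsets) {
        const nx = x + dx;
        const ny = y + dy;
        if (nx < 0 || ny < 0 || nx >= width || ny >= height) continue;
        const n = ny * width + nx;
        if (covered[n]) {
          sum += values[n];
          count++;
        }
      }
      if (count > 0) {
        outValues[i] = sum / count;
        outCovered[i] = 1;
      }
    }
  }
  return { values: outValues, covered: outCovered };
}

// Apply several passes; each pass extends the covered region by one texel.
function pad(values, covered, width, height, passes) {
  let state = { values, covered };
  for (let pass = 0; pass < passes; pass++) {
    state = padOnce(state.values, state.covered, width, height);
  }
  return state;
}

// A 5 x 1 strip where only the centre texel was rendered.
const padded = pad([0, 0, 8, 0, 0], [0, 0, 1, 0, 0], 5, 1, 1);
```

Each pass reads only from the previous pass, so the region grows evenly in all directions instead of smearing in scan order.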

If you’re a l33t RealityServer developer then you know about the Admin Console page served up on port 8081 by default (configurable of course). This has in the past only been a direct exposure of the internal Iray admin page, however in RealityServer 6.0 we have started to extend it with new RealityServer specific pages. Now you can see a list of the active WebSocket streams and also the active render loops that are running. This can be great when diagnosing various issues.

Since we had the logic already for the generate_fibers_on_mesh command, we decided to create an additional more generic command called generate_points_on_mesh as well. This takes a Triangle_mesh or Polygon_mesh object and gives you back a list of random points distributed on the surface along with normals at those points, also potentially randomised to some degree. This can be great for scattering objects in scenes, for example if you want to randomly place trees over a terrain. Here’s a quick test using the generate_points_on_mesh command to create transforms for a bunch of cylinders created with the generate_cylinder command.
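For readers curious about what scattering points over a mesh involves, here is a minimal sketch of the usual approach: pick a triangle with probability proportional to its area, then pick a uniformly distributed point inside it. This is a generic illustration with hypothetical names and a plain array based mesh layout; it is not the implementation behind generate_points_on_mesh and it does not randomise the normals.

```javascript
const subtract = (a, b) => [a[0] - b[0], a[1] - b[1], a[2] - b[2]];
const cross = (a, b) => [
  a[1] * b[2] - a[2] * b[1],
  a[2] * b[0] - a[0] * b[2],
  a[0] * b[1] - a[1] * b[0]
];

// vertices: array of [x, y, z]; triangles: array of [i, j, k] vertex indices.
// Returns count entries of { point, normal } spread uniformly over the surface.
function samplePointsOnMesh(vertices, triangles, count, random = Math.random) {
  const faces = triangles.map(([i, j, k]) => {
    const a = vertices[i];
    const b = vertices[j];
    const c = vertices[k];
    const n = cross(subtract(b, a), subtract(c, a));
    const len = Math.hypot(n[0], n[1], n[2]);
    const normal = len > 0 ? n.map((v) => v / len) : [0, 0, 0];
    return { a, b, c, area: len / 2, normal };
  });
  const totalArea = faces.reduce((sum, face) => sum + face.area, 0);

  const samples = [];
  for (let s = 0; s < count; s++) {
    // Choose a face with probability proportional to its area.
    let target = random() * totalArea;
    let chosen = faces[faces.length - 1];
    for (const face of faces) {
      if (target < face.area) {
        chosen = face;
        break;
      }
      target -= face.area;
    }
    // Uniform barycentric coordinates via the square root parameterisation.
    const r1 = Math.sqrt(random());
    const r2 = random();
    const u = 1 - r1;
    const v = r1 * (1 - r2);
    const w = r1 * r2;
    const point = [0, 1, 2].map(
      (d) => u * chosen.a[d] + v * chosen.b[d] + w * chosen.c[d]
    );
    samples.push({ point, normal: chosen.normal });
  }
  return samples;
}

// A unit square in the z = 0 plane made of two triangles.
const square = [[0, 0, 0], [1, 0, 0], [1, 1, 0], [0, 1, 0]];
const scattered = samplePointsOnMesh(square, [[0, 1, 2], [0, 2, 3]], 100);
```

The area weighting is what keeps the distribution even: without it, meshes with many small triangles in one region would receive far more points there.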

Using the distill_material command it was previously indirectly possible to bake the results of an MDL function call using the baking functionality of the distiller, however this unfortunately required making a placeholder material and attaching the function to it in a way that you knew the distiller would not change it (for example attaching it to the emission). There is now an mdl_bake_function_call command which allows you to pass any MDL function call into the baker and use just the baking functionality without distilling anything.

There are quite a lot of different potential uses for this. For example you might want to bake a very complex graph of connected functions into a simple texture image or you might want to write custom MDL functions which perform image manipulation you want to have run on the GPU during baking. This command basically provides a way for you to execute your MDL function over the full UV texture domain and output the results to a static image.
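Conceptually, baking amounts to evaluating a function at every texel of the UV square and storing the results. The JavaScript sketch below illustrates only that idea, with a plain JavaScript function standing in for the MDL function call. It is not how mdl_bake_function_call is invoked, and the name bakeOverUv is hypothetical.

```javascript
// Conceptual sketch only: fn(u, v) stands in for an MDL function call.
// Returns a single channel image as a Float32Array of width * height values.
function bakeOverUv(fn, width, height) {
  const image = new Float32Array(width * height);
  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      // Sample at the centre of each texel so u and v stay inside (0, 1).
      const u = (x + 0.5) / width;
      const v = (y + 0.5) / height;
      image[y * width + x] = fn(u, v);
    }
  }
  return image;
}

// Example: bake an 8 by 8 checkerboard pattern into a 256 by 256 image.
const checker = bakeOverUv(
  (u, v) => (Math.floor(u * 8) + Math.floor(v * 8)) % 2,
  256,
  256
);
```

The real command does this work for arbitrary MDL functions on the GPU, which is what makes it attractive for complex function graphs.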

The command also lets you get the results in a wide variety of ways: you can have it return the encoded image data, base64 encode it, return a Canvas or create scene elements such as Texture or Image for you.

We often see customers wanting to do two types of operations on the canvases they are producing: multiplying them for blending or adding them for Light Path Expression compositing. This could already be done reasonably quickly with V8 commands, but in some cases not quickly enough. To help with that we have added a new combine_canvases command. This takes two canvases and an operation (currently only multiply or add, but we are considering adding others in the future), runs the operation and returns a new canvas.

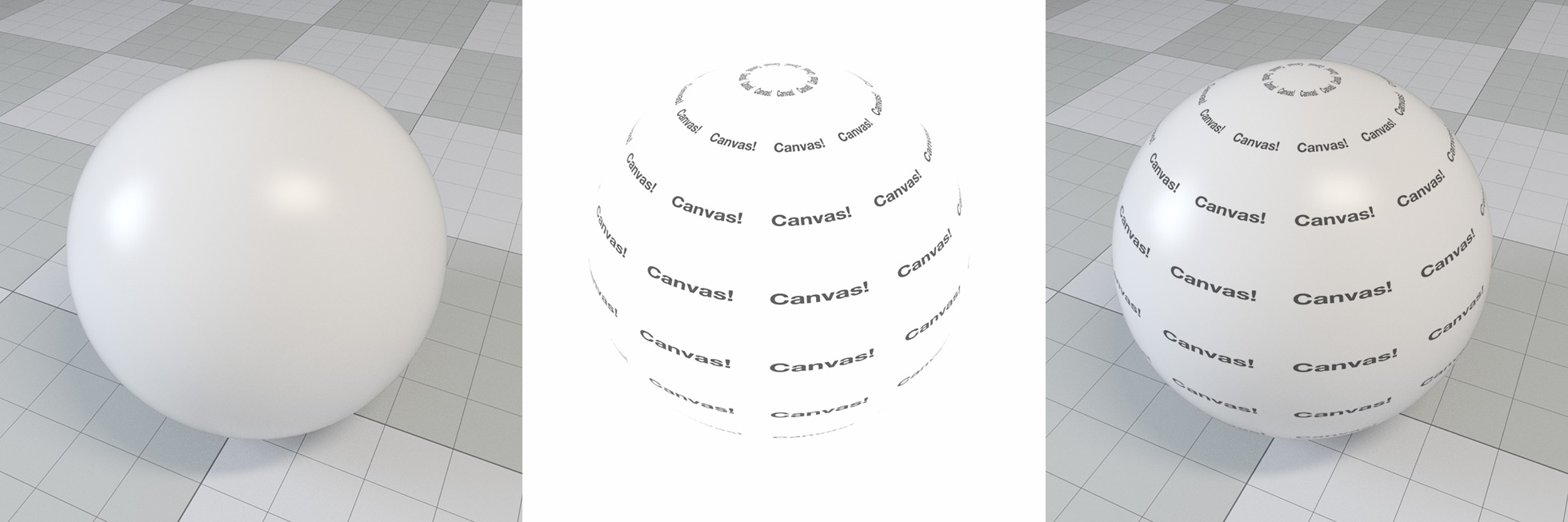

There are quite a few use cases for this. In the above example we are rendering a photorealistic image on the left, while in the middle we are rendering just the BSDF Weight canvas with a custom texture containing some text, which can be produced much more quickly. By using the canvas combiner we can multiply these two canvases to get the result on the right without re-rendering the more expensive photorealistic image. This can be used for personalisation applications where users can upload their own content to show on products. While a V8 JavaScript implementation we had was taking around 30ms for a typical image, the combine_canvases command took around 3ms, roughly a tenfold speedup.
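The arithmetic involved is simple, which is why it is easy to prototype in a V8 command before reaching for the native version. Below is a minimal sketch of the per-pixel operation on raw pixel data held in Float32Arrays. It shows only the maths and is not the combine_canvases command or its interface. The function name combinePixels is hypothetical.

```javascript
// Hypothetical sketch of combining two canvases' pixel data.
// a, b: Float32Arrays of identical length (same resolution and channel count).
// operation: 'multiply' (blending) or 'add' (Light Path Expression compositing).
function combinePixels(a, b, operation) {
  if (a.length !== b.length) {
    throw new Error('canvases must have the same size');
  }
  const out = new Float32Array(a.length);
  if (operation === 'multiply') {
    for (let i = 0; i < a.length; i++) out[i] = a[i] * b[i];
  } else if (operation === 'add') {
    for (let i = 0; i < a.length; i++) out[i] = a[i] + b[i];
  } else {
    throw new Error('unsupported operation: ' + operation);
  }
  return out;
}
```

For the personalisation case described above, the expensive photorealistic render would be one input and the cheap BSDF Weight render the other, combined with multiply.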

You may not have experimented with it yet, but using JavaScript typed arrays you can create some fairly efficient image processing in JavaScript V8 commands. One pain point however was that there was no way to directly get a named canvas as a JavaScript Canvas object. The get_canvas command has now been extended with an encode parameter which is on by default to preserve the current behaviour. If you set it to false, get_canvas will instead return the actual canvas. This is not of any value in JSON-RPC, however in V8 it allows you to fetch any named canvas into a JavaScript Canvas object.

Many users who use AWS services from within EC2 instances take advantage of the fact that AWS SDK based tools can retrieve their credentials directly from EC2 instance metadata rather than specifying them explicitly in configuration files. This is more secure and much more flexible, so we have extended the AWS SQS and S3 features used with the Queue Manager functionality to allow for automatic credential fetching if you are running on an EC2 instance. To use it you just omit the credential configuration and RealityServer will automatically try to fetch them. Note that you need to assign an IAM role to your EC2 instance which has permission to access the services you want to use.

The RS.Stream.update_camera method in the client library now supports wait_for_render semantics. If this property in the data object is set to true then the returned Promise will not resolve until the camera change is available in a rendered image. A common problem when implementing a RealityServer application is that after making a change you could briefly receive an image of the scene in which the change was not yet reflected. This allows you to ensure the changes you have made are actually present in the image you are showing.
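As a rough sketch of how this might look in application code, the helper below adds wait_for_render to whatever camera data you already pass to update_camera. The helper name updateCameraAndWait is hypothetical, stream is assumed to be an RS.Stream that is already streaming, and the contents of cameraData are left to your application since they are not described here.

```javascript
// Hypothetical helper: apply a camera change and resolve only once the
// change is visible in a rendered image.
// stream:     assumed to be an RS.Stream instance that is already streaming.
// cameraData: the data object your application already passes to update_camera.
async function updateCameraAndWait(stream, cameraData) {
  // Copy the caller's data and request wait_for_render semantics.
  const data = Object.assign({}, cameraData, { wait_for_render: true });
  // With wait_for_render set, the Promise returned by update_camera does not
  // resolve until the camera change is available in a rendered image.
  return stream.update_camera(data);
}
```

Awaiting this helper before updating other parts of your user interface is one way to keep what the user sees in step with the camera they asked for.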

More conveniences for you DevOps types: you can now add echo statements to your RealityServer configuration files, which can be great for debugging configuration issues. You can try it by adding something like this to your realityserver.conf file. Messages are logged to stderr since the full logging system has not started at the time the configuration files are parsed.

echo Hello World!

As was announced with the final release of RealityServer 5.3, SPM licensing is no longer available in RealityServer 6.0 and we have moved over fully to RLM licensing for floating and node-locked licenses and our own Cloud license system for usage based tracking. You can read more about all of the licensing options on the RealityServer Licensing page.

This is a huge release, however there are many changes we made here which lay the groundwork for some great new features in the future. Just like previous versions we will be releasing several incremental releases in the coming months with both new features and fixes. Be sure to let us know if you have features you’d really like to see and of course we’d love to hear about how you are using RealityServer 6.0.

Paul Arden has worked in the Computer Graphics industry for over 20 years, co-founding the architectural visualisation practice Luminova out of university before moving to mental images and NVIDIA to manage the Cloud-based rendering solution, RealityServer, now managed by migenius where Paul serves as CEO.