We have just released RealityServer 4.4, which includes the new NVIDIA Iray 2016.0. We will periodically release updated versions as new improvements and Iray updates become available. We’ll cover some of the highlights of this release here, but users are strongly encouraged to read both the RealityServer release notes (relnotes.txt) and the Iray release notes (neurayrelnotes.pdf) provided with the release. Let’s take a look at the new features, some of which many of our users have been asking about for some time.

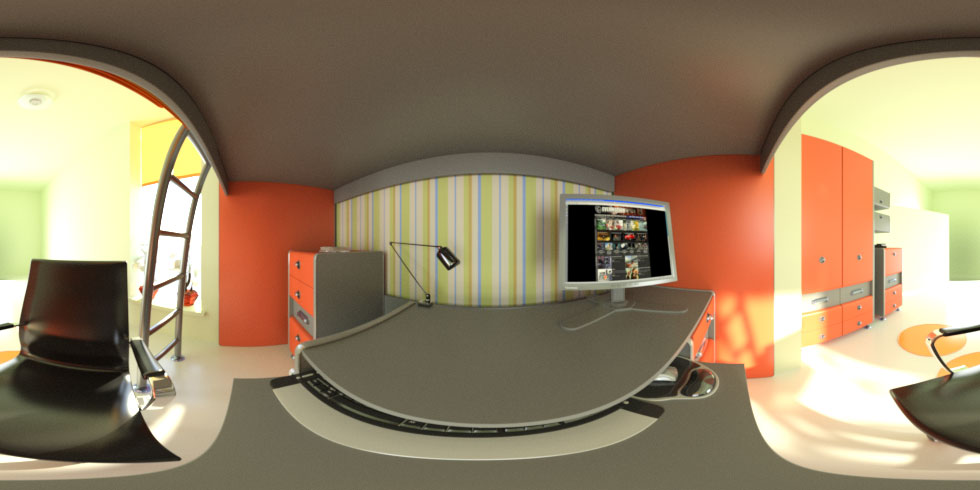

Iray 2016.0 introduces a new concept of lens distortions. This functionality allows for several interesting effects, including simulating the lenses of very specific cameras (extremely useful for photomontage). However, the option of most interest to RealityServer customers is likely to be the ability to render spherical images using these distortions. This allows you to create panoramic imagery with a single render. Previously, several of our customers used the workaround of rendering six images with a 90 degree field of view and assembling them into a cube map. While this works, it can sometimes result in visible seams. Rendering with a spherical lens distortion avoids the problem because the whole panorama comes from one render, so there are no separate faces to stitch together. To activate it, set the mip_lens_distortion_type attribute on your scene’s camera to “spherical”. You’ll want to render with an aspect ratio of 2:1 for best results.

Spherical panorama rendered with RealityServer 4.4
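As a rough sketch of what enabling the spherical lens could look like from a client, the snippet below builds a JSON-RPC request that sets the attribute and picks a 2:1 resolution from a target width. Only the attribute name and its value come from this post. The `element_set_attribute` command name, its parameter names, the camera name `cam` and both helper functions are assumptions made for illustration, so check the command documentation shipped with your installation before relying on them.

```javascript
// Build a JSON-RPC 2.0 request that switches a camera to spherical lens distortion.
// ASSUMPTION: the command name "element_set_attribute" and its parameter names
// are illustrative; only the attribute name and value are taken from the post.
function sphericalCameraRequest(cameraName, id) {
  return {
    jsonrpc: "2.0",
    method: "element_set_attribute",
    params: {
      element_name: cameraName,
      attribute_name: "mip_lens_distortion_type",
      attribute_value: "spherical",
      attribute_type: "String",
      create: true
    },
    id: id
  };
}

// Derive a 2:1 resolution from a desired width (rounded down to an even number
// so that the height is a whole number of pixels).
function panoramaResolution(width) {
  var evenWidth = width - (width % 2);
  return { x: evenWidth, y: evenWidth / 2 };
}

var request = sphericalCameraRequest("cam", 1); // "cam" is a hypothetical camera name
var resolution = panoramaResolution(4096);

console.log(JSON.stringify(request));
console.log(resolution.x + " x " + resolution.y);
```

The request object would then be sent as the body of an HTTP POST to your RealityServer endpoint, followed by whatever commands you normally use to set the resolution and render.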

A new command, generate_mesh, has been added to the RealityServer JSON-RPC web services API. Previously, the only way to get geometry into RealityServer was to have it on your server’s filesystem and load it with one of the available importers (e.g., OBJ or .mi). Now you can feed mesh data to RealityServer directly through web services. This allows you to build logic outside RealityServer to generate geometry (for example, an application that generates a room shape based on an outline) and then pass it in dynamically. There is a very wide range of potential uses for this command, and while customers have always been able to build server-side C++ plugins to expose this functionality, we are now pleased to include it as an out-of-the-box feature.

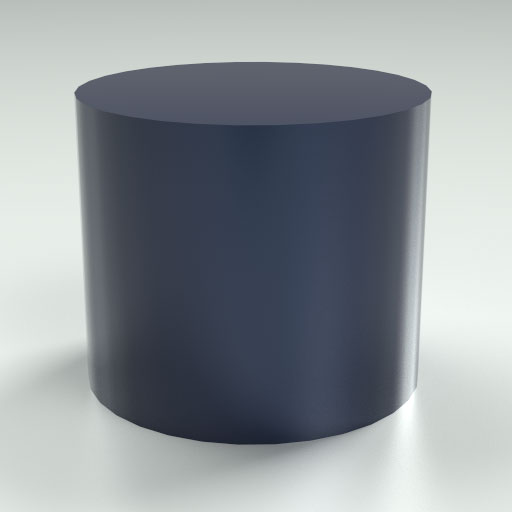

Using this new feature you can now create scenes without ever loading geometry from disk. The image above was created using a server-side JavaScript command that calls the new generate_box command (described below), which in turn uses the new generate_mesh command to make the actual geometry. The script also assigns materials, creates a light, and sets up the camera and a clipping plane to cut out the front wall. All of this is then exposed as a single command to the client, which can just call something similar to generate_room with the parameters for the size of the room and the table.

Note: You may need to increase the http_post_body_limit setting in your realityserver.conf configuration if you plan to pass large amounts of data to this command. If you have extremely large datasets it may still be more efficient to put them into .mi or OBJ format and get them onto the server’s filesystem.
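To make the web services route concrete, here is a minimal sketch that builds a generate_mesh request for a single quad and measures the size of the resulting POST body. The mesh layout (points, normals, vertices with `v` and `n` indices, polygons as lists of vertex indices) and the `name` and `mesh` parameters follow the cylinder script in this post. The JSON-RPC envelope, the object name `floor_quad`, the helper names and the byte budget passed to `fitsBodyLimit` are assumptions for illustration, and the budget is not meant to mirror the units or default of http_post_body_limit.

```javascript
// A unit quad in the XY plane. Points and normals are stored once; each vertex
// pairs a point index (v) with a normal index (n); each polygon lists vertex indices.
function quadMesh() {
  return {
    vectors: {
      points: [
        { x: 0.0, y: 0.0, z: 0.0 },
        { x: 1.0, y: 0.0, z: 0.0 },
        { x: 1.0, y: 1.0, z: 0.0 },
        { x: 0.0, y: 1.0, z: 0.0 }
      ],
      normals: [{ x: 0.0, y: 0.0, z: 1.0 }]
    },
    vertices: [{ v: 0, n: 0 }, { v: 1, n: 0 }, { v: 2, n: 0 }, { v: 3, n: 0 }],
    polygons: [[0, 1, 2, 3]]
  };
}

// Wrap the mesh in a JSON-RPC 2.0 request for generate_mesh.
function generateMeshRequest(name, mesh, id) {
  return {
    jsonrpc: "2.0",
    method: "generate_mesh",
    params: { name: name, mesh: mesh },
    id: id
  };
}

// Size in bytes of the UTF-8 encoded JSON body that would be POSTed.
function bodySizeInBytes(request) {
  return new TextEncoder().encode(JSON.stringify(request)).length;
}

// Compare the body size against a byte budget chosen by the caller (hypothetical helper).
function fitsBodyLimit(request, limitBytes) {
  return bodySizeInBytes(request) <= limitBytes;
}

var meshRequest = generateMeshRequest("floor_quad", quadMesh(), 1);
console.log("body size in bytes: " + bodySizeInBytes(meshRequest));
console.log("fits in 1 MB budget: " + fitsBodyLimit(meshRequest, 1024 * 1024));
```

Checking the body size before sending gives you an early signal that a mesh is large enough to need a higher limit, or that it is better delivered as a file.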

One of the great things about the new generate_mesh command is that, in addition to being callable through the web services API, it can also be called directly from the server-side JavaScript API. This lets you quickly author geometry-generating scripts in JavaScript which run on the server and expose parameterised commands to the web services API. If you have to create procedural or parametric geometry, this can greatly reduce the amount of data you need to send down the wire, since you can just send the parameters to the command and let it do the work on the server. We’ve included two simple generators in the javascript_services directory of your installation, generate_box and generate_extrusion. Full JavaScript source code is provided, so you can use these as a guide for your own.

    //# name = generate_cylinder
    //# description = Creates a cylinder from a radius, a height and a number of sides.
    //# argument_descriptions = [ { "name": "name", "type" : "String", "description" : "The name of the object to create." } ]
    //# argument_descriptions = [ { "name": "radius", "type" : "Float32", "default": 1.0, "description" : "Radius of the cylinder." } ]
    //# argument_descriptions = [ { "name": "height", "type" : "Float32", "default": 0.0, "description" : "Height of the cylinder." } ]
    //# argument_descriptions = [ { "name": "sides", "type" : "Sint32", "default": 16, "description" : "Number of sides to give the cylinder." } ]
    //# return_type = void

    // this is the structure for our mesh
    var mesh = {
        "vectors" : {
            "points" : [],
            "normals" : []
        },
        "vertices" : [],
        "polygons" : []
    };

    // keep separate arrays for top and bottom polygons
    var bottom = [];
    var top = [];

    for (var i = 0; i < sides; i++) {
        var r = i / sides * Math.PI * 2.0; // radians for our angle
        var c = Math.cos(r) * radius; // the x component
        var s = Math.sin(r) * radius; // the y component
        var b = { x: c, y: s, z: 0.0 }; // bottom vertex
        var t = { x: c, y: s, z: height }; // top vertex
        var n = { x: c, y: s, z: 0.0 }; // normal vector
        var nl = Math.sqrt((n.x*n.x) + (n.y*n.y) + (n.z*n.z)); // normal length
        n.x = n.x / nl; n.y = n.y / nl; n.z = n.z / nl; // normalize the normal

        mesh.vectors.points[i] = b; // bottom ring for side polygons
        mesh.vectors.points[i+sides] = t; // top ring for side polygons
        mesh.vectors.points[i+(sides*2)] = b; // bottom cap
        mesh.vectors.points[i+(sides*3)] = t; // top cap
        mesh.vectors.normals[i] = n; // side normals

        mesh.vertices[i] = { v: i, n: i }; // bottom ring vertex
        mesh.vertices[i+sides] = { v: i+sides, n: i }; // top ring vertex
        mesh.vertices[i+(sides*2)] = { v: i+(sides*2), n: sides }; // bottom cap vertex
        mesh.vertices[i+(sides*3)] = { v: i+(sides*3), n: sides+1 }; // top cap vertex

        mesh.polygons.push([(i+1) % sides, i, i+sides, ((i+1) % sides) + sides]); // side polygon
        bottom.push(i+(sides*2)); // bottom polygon
        top.push(i+(sides*3)); // top polygon
    }

    mesh.vectors.normals.push({ x:0.0, y:0.0, z:-1.0 }); // bottom normal (index: sides)
    mesh.vectors.normals.push({ x:0.0, y:0.0, z:1.0 }); // top normal (index: sides+1)
    mesh.polygons.push(bottom); // add the bottom polygon
    mesh.polygons.push(top); // add the top polygon

    // pass the mesh along to the appropriate command
    NRS_Commands.generate_mesh({name: name, mesh: mesh});

Cylinder generator script (Radius 3.5)

Cylinder from generator script (Radius 0.25)
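Once a generator like this is installed, the client only has to send its parameters. The sketch below builds such a request and shows that the payload stays small regardless of how many sides are requested. It assumes the script is exposed as a JSON-RPC method under the name given in its header (generate_cylinder); the object name `cyl`, the helper function and the parameter values are made up for the example.

```javascript
// Build a JSON-RPC 2.0 request for the generate_cylinder script.
// ASSUMPTION: the script is reachable as a method named after its "name" header.
function generateCylinderRequest(name, radius, height, sides, id) {
  return {
    jsonrpc: "2.0",
    method: "generate_cylinder",
    params: { name: name, radius: radius, height: height, sides: sides },
    id: id
  };
}

// The same cylinder at two very different tessellation levels.
var coarse = JSON.stringify(generateCylinderRequest("cyl", 0.25, 1.0, 16, 1));
var fine = JSON.stringify(generateCylinderRequest("cyl", 0.25, 1.0, 4096, 2));

// Only the digits of the "sides" value differ, so both bodies are nearly the same size,
// whereas sending the full mesh would grow with the number of sides.
console.log("coarse request: " + coarse.length + " characters");
console.log("fine request:   " + fine.length + " characters");
```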

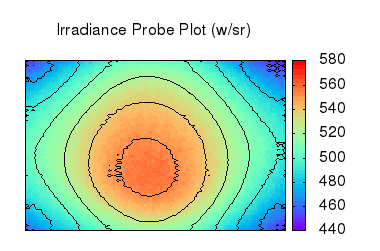

RealityServer has for some time included the Iray irradiance probes feature, which lets you render an image containing irradiance (the amount of light arriving at a particular point) information for specific locations in the scene. While this works well, the vast majority of the time when using this feature you would ideally like to get back the actual values rather than an image. We have now introduced a new special file format for the render command called “array”. When you specify this format, instead of returning binary image data the render command modifies its return type and sends back an array of values. Irradiance probe renders are very quick, so you can potentially set up many reading planes in your scene and compute them all at once.

The format of these values will depend on your selection for canvas_pixel_type; to get back monochromatic irradiance values, you can specify “Float32” as your canvas_pixel_type. You will then get back a simple array of numbers. This new functionality makes it especially straightforward to build cloud-based lighting analysis tools using RealityServer. The accompanying image is a simple plot of a grid of irradiance probes and their resulting irradiance values. This type of material can now be produced programmatically without resorting to re-reading image data or performing conversions. You get the numbers you need right out of the render call and you can code whatever data visualisation you like.

Irradiance plot from numerical rendering
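As an illustration of working with numerical results, the sketch below prepares render parameters for the “array” format and turns a flat list of irradiance values into grid rows and summary statistics. The `format` parameter name, the flat row-major layout of the response, the three-column probe grid, the helper names and the sample values are all assumptions made for the example; only the “array” format and the “Float32” canvas_pixel_type come from the text above.

```javascript
// Merge caller-supplied render parameters with the settings for numerical output.
// ASSUMPTION: the render command takes the format in a parameter named "format".
function irradianceRenderParams(baseParams) {
  var params = {};
  Object.keys(baseParams).forEach(function (key) { params[key] = baseParams[key]; });
  params.format = "array";
  params.canvas_pixel_type = "Float32";
  return params;
}

// Split a flat array of probe values into rows of `columns` values each.
function toGrid(values, columns) {
  if (columns <= 0 || values.length % columns !== 0) {
    throw new Error("value count must be a multiple of the column count");
  }
  var rows = [];
  for (var i = 0; i < values.length; i += columns) {
    rows.push(values.slice(i, i + columns));
  }
  return rows;
}

// Minimum, maximum and mean of the probe values.
function summarise(values) {
  if (values.length === 0) {
    throw new Error("no values to summarise");
  }
  var min = values[0], max = values[0], sum = 0;
  values.forEach(function (v) {
    if (v < min) { min = v; }
    if (v > max) { max = v; }
    sum += v;
  });
  return { min: min, max: max, mean: sum / values.length };
}

// Made-up values standing in for the array returned by a render call.
var sampleValues = [120, 135, 150, 110, 140, 160];
var grid = toGrid(sampleValues, 3);
var stats = summarise(sampleValues);

console.log(JSON.stringify(irradianceRenderParams({})));
console.log(JSON.stringify(grid));
console.log(JSON.stringify(stats));
```

From here the rows can be handed to any plotting or reporting code, for example to flag probes that fall below a target level.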

This initial release contains many bug fixes and improvements, both in Iray and in RealityServer itself. In particular, several enhancements have been made to Iray memory handling, which could help if you frequently found yourself running out of GPU memory or encountering other strange GPU-related issues. Speed and quality enhancements have also been made in several places; however, these can be quite scene specific, so you may not notice them on all scenes. We also added a remove_render_context command, which can be useful if you need to programmatically release a render context you created. We are working on many other great enhancements for RealityServer 4.4, which we will roll out in incremental releases. We hope you find these new features useful, but as always be sure to contact us if you have any ideas or questions.

Paul Arden has worked in the Computer Graphics industry for over 20 years, co-founding the architectural visualisation practice Luminova out of university before moving to mental images and NVIDIA to manage the Cloud-based rendering solution, RealityServer, now managed by migenius where Paul serves as CEO.